Measuring AI Productivity: Beyond Adoption Metrics

Other platforms track whether developers use AI tools. We track whether AI actually makes them more productive. Here's the difference—and why it matters.

Every DX platform now claims to measure “AI transformation.” But look closer at what they actually track:

- Binary adoption: Did someone use an AI tool? (Yes/No)

- Usage counts: How many times was AI invoked?

- Time spent: How long were AI tools active?

These are activity metrics, not productivity metrics. And there’s a massive difference.

The Research Says More Is Happening

Let’s start with what we actually know about AI coding tools. In a controlled experiment (writing an HTTP server in JavaScript), GitHub’s research found that developers using Copilot completed the task 55.8% faster than those without it. That’s the headline number everyone cites.

But here’s what’s more interesting: 60-75% of users reported feeling more fulfilled with their job, less frustrated when coding, and able to focus on more satisfying work. 73% said Copilot helped them stay in flow. 87% said it preserved mental effort during repetitive tasks.

Those are the numbers that actually matter for team health—and almost nobody tracks them.

What We Don’t Know Is The Problem

A 2024 GitClear analysis found that AI-generated code has a 41% higher churn rate than human-written code. That means more revisions, more rework, and more time spent fixing what the AI produced.

So which is it? 55% faster, or 41% more rework?

The answer is: it depends on the developer, the task, and the tool. And if you’re not measuring at the session level, you have no idea which scenario applies to your team.

The Questions That Actually Matter

What leaders need to know isn’t “how many people used AI” but:

1. Does AI Make Us Faster? Not adoption rates—actual cycle time reduction. Are AI-assisted PRs faster from start to merge?

2. Does AI Improve Quality? Are AI-assisted PRs better or worse than manual code? What’s the bug rate? Rework rate?

3. Which AI Tools Work Best For Us? You may be paying for Claude Code, Cursor, Copilot, and Gemini. Which one delivers the best ROI for your specific team?

4. Are Developers Getting Better at AI? Is the team’s AI productivity improving over time? Are they learning to use these tools effectively?

5. What’s the Actual ROI? Not a vendor’s promise—your measured productivity gain versus cost.

Most platforms can’t answer any of these questions. They can tell you that 80% of your developers used an AI tool this month. So what?

What You Should Be Tracking Instead

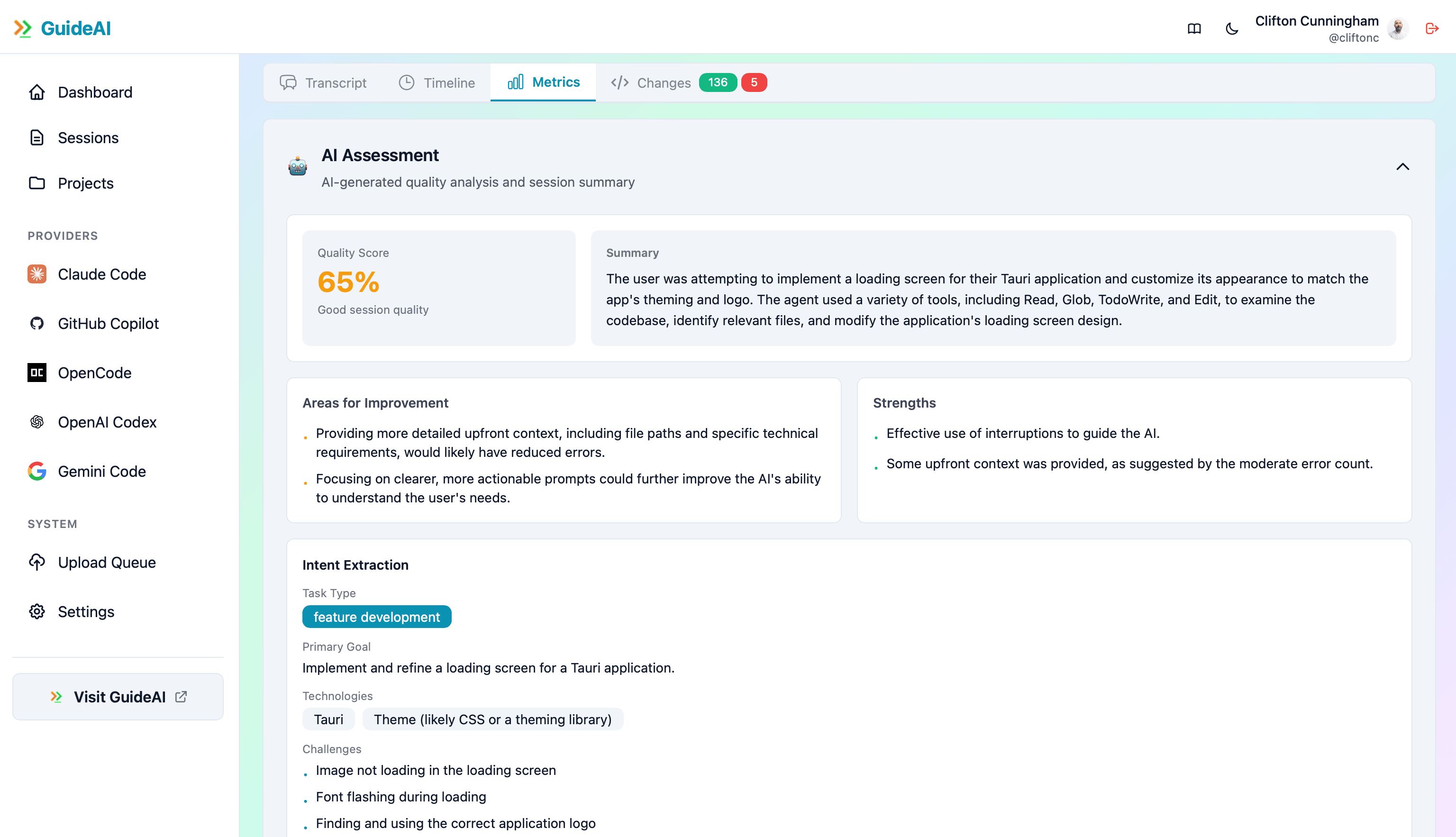

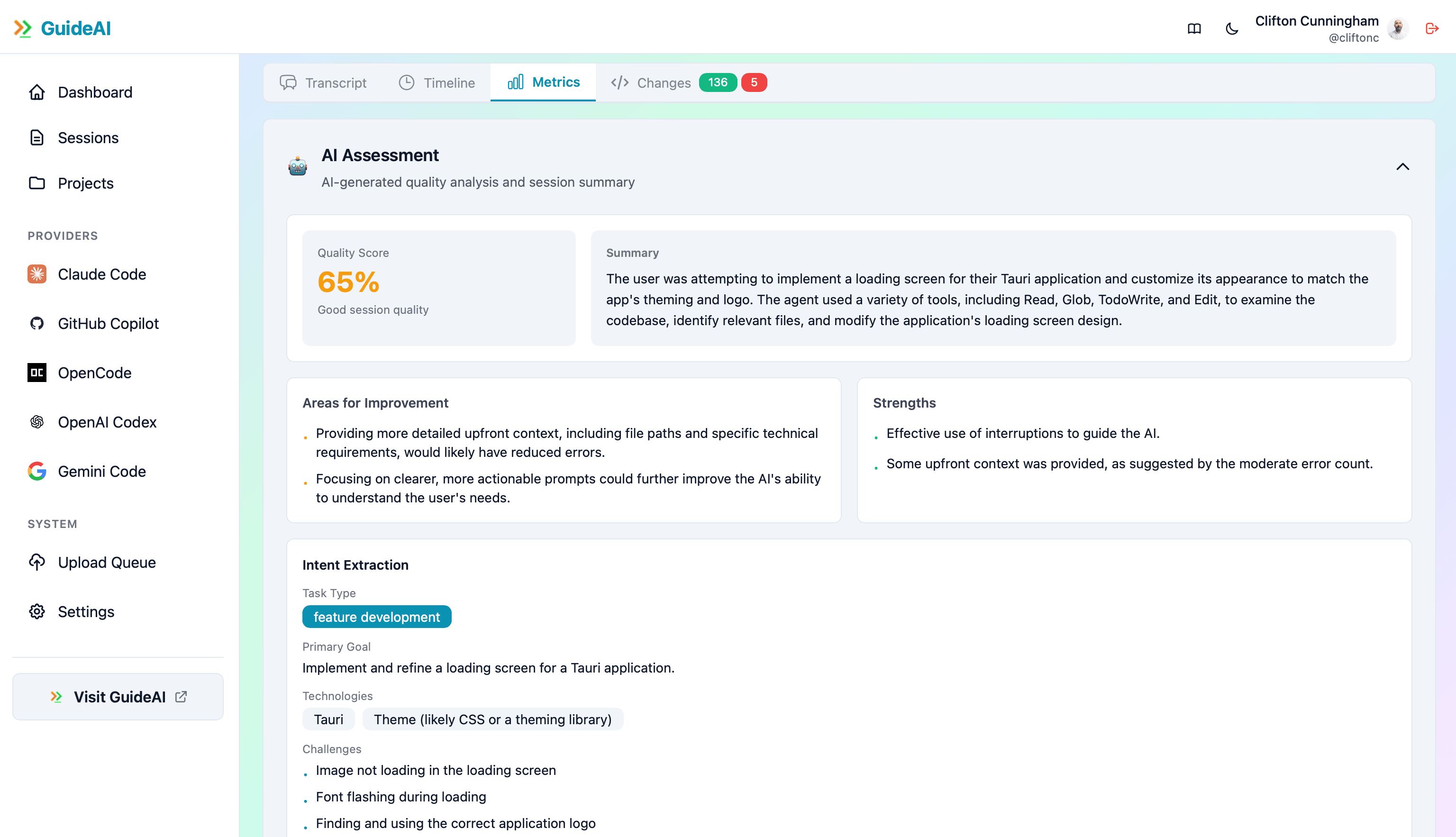

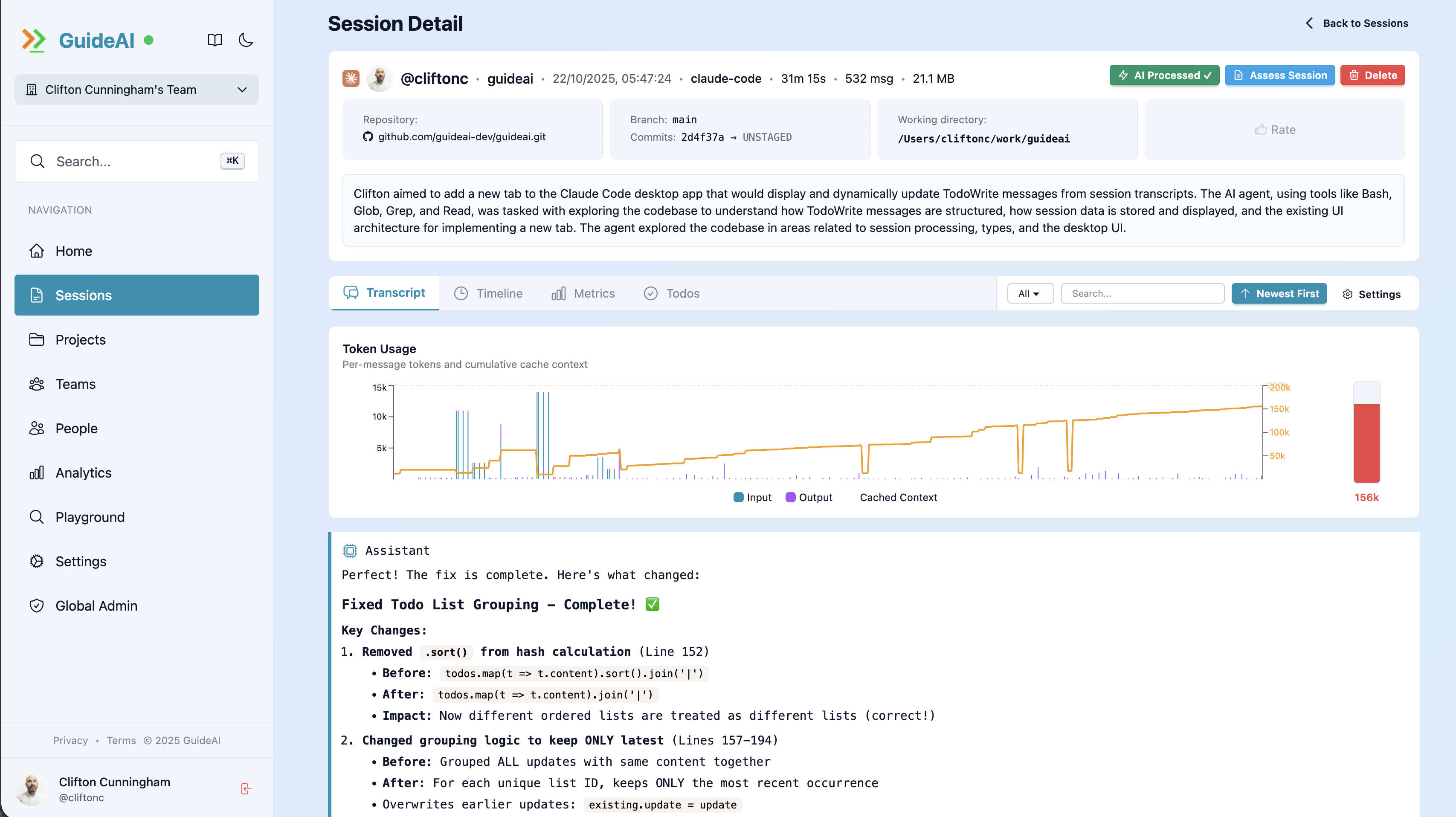

Session-Level Metrics

For every AI coding session, you need to understand:

Conversation Quality

- Message count and depth

- Context utilization (is the AI actually understanding the codebase?)

- Turn-taking patterns (back-and-forth vs. single-shot prompts)

Productivity Indicators

- Tasks completed per session

- Code output quality

- Goal achievement rate

- Developer satisfaction with the session

Tool Effectiveness

- Tool success rate by task type

- Error resolution speed

- Automation level achieved
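To make these session-level metrics concrete, here is a minimal Python sketch of a per-session record with one derived metric from each group above. The `AISession` class, its field names, and the helper functions are hypothetical illustrations, not an export format from any AI tool; how each field gets collected (editor telemetry, CLI logs, a post-session survey) is left open.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISession:
    """One AI coding session. All field names are hypothetical."""
    provider: str                # e.g. "Claude Code", "Cursor"
    developer: str
    task_type: str               # e.g. "refactoring", "bug fix"
    user_messages: int           # prompts the developer sent
    ai_messages: int             # responses the assistant returned
    tool_calls: int              # tool/command invocations made by the assistant
    failed_tool_calls: int
    goals_set: int               # tasks the developer set out to finish
    goals_met: int               # tasks actually finished in the session
    lines_accepted: int          # lines of AI output kept in the codebase
    satisfaction: Optional[int] = None  # optional 1-5 post-session rating


def turn_pattern(s: AISession) -> str:
    """Conversation quality: single-shot prompt vs. back-and-forth dialogue."""
    return "single-shot" if s.user_messages <= 1 else "back-and-forth"


def tool_success_rate(s: AISession) -> Optional[float]:
    """Tool effectiveness: share of tool calls that did not fail."""
    if s.tool_calls == 0:
        return None
    return (s.tool_calls - s.failed_tool_calls) / s.tool_calls


def goal_achievement_rate(s: AISession) -> Optional[float]:
    """Productivity indicator: share of intended tasks completed."""
    if s.goals_set == 0:
        return None
    return s.goals_met / s.goals_set


session = AISession("Cursor", "dev-a", "refactoring",
                    user_messages=4, ai_messages=4,
                    tool_calls=10, failed_tool_calls=1,
                    goals_set=2, goals_met=2,
                    lines_accepted=120, satisfaction=4)
print(turn_pattern(session), tool_success_rate(session), goal_achievement_rate(session))
# back-and-forth 0.9 1.0
```

Returning `None` rather than `0.0` when a session made no tool calls or set no goals keeps "not applicable" from being averaged in as "failed".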

Cross-Provider Comparison

If you’re paying for multiple AI tools (and most teams are), you need to compare them on the same metrics:

| Provider | Success Rate | LOC/Session | Task Completion |
|---|---|---|---|
| Claude Code | ? | ? | ? |
| Cursor | ? | ? | ? |
| GitHub Copilot | ? | ? | ? |
| Gemini | ? | ? | ? |

If you can’t fill in this table with real data from your team, you’re making licensing decisions blind.
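One way to fill in that table is to aggregate session records per provider. The sketch below assumes each session has already been labeled with a success flag, the lines of AI code kept, and attempted/completed task counts; those definitions, the `Session` class, and the function names are assumptions for illustration, not a standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    provider: str
    succeeded: bool        # assumption: session reached the goal the developer set
    loc: int               # lines of AI-generated code kept from the session
    tasks_attempted: int
    tasks_completed: int


def compare_providers(sessions):
    """Return {provider: (success_rate, loc_per_session, task_completion)}."""
    grouped = defaultdict(list)
    for s in sessions:
        grouped[s.provider].append(s)
    rows = {}
    for provider, group in grouped.items():
        n = len(group)
        attempted = sum(s.tasks_attempted for s in group)
        completed = sum(s.tasks_completed for s in group)
        rows[provider] = (
            sum(s.succeeded for s in group) / n,   # True counts as 1
            sum(s.loc for s in group) / n,
            completed / attempted if attempted else 0.0,  # 0.0 if no tasks recorded
        )
    return rows


def to_markdown(rows):
    """Render the comparison table, one row per provider."""
    lines = ["| Provider | Success Rate | LOC/Session | Task Completion |",
             "|---|---|---|---|"]
    for provider, (rate, loc, done) in sorted(rows.items()):
        lines.append(f"| {provider} | {rate:.0%} | {loc:.0f} | {done:.0%} |")
    return "\n".join(lines)


sessions = [
    Session("Cursor", True, 80, 2, 2),
    Session("Cursor", False, 20, 1, 0),
    Session("GitHub Copilot", True, 40, 1, 1),
]
print(to_markdown(compare_providers(sessions)))
```

Task completion here pools tasks across all of a provider’s sessions instead of averaging per-session rates, so sessions with more tasks carry more weight; either choice is defensible as long as every provider is measured the same way.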

Why Session-Level Matters

Start with how long the gains take to arrive. Microsoft research found it takes 11 weeks for users to fully realize the satisfaction and productivity gains of AI tools.

That’s nearly three months of learning curve. If you’re only tracking adoption, you’ll see usage spike, maybe dip, maybe plateau—and you’ll have no idea whether your developers are actually getting better at using these tools.

At the session level, you can see:

- Are early sessions struggling while later ones succeed?

- Which developers are getting ROI quickly vs. struggling?

- Which task types see the biggest improvements?

This is actionable data. Binary adoption is not.
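A simple way to check whether early sessions struggle while later ones succeed is to compare a success rate over the first few sessions with the rate afterwards. This is a hedged sketch: the `(key, date, succeeded)` tuple shape, the `early_n=5` cutoff, and the `learning_curve` name are assumptions. Passing a developer ID as the key answers "who is getting ROI quickly"; passing a task type instead gives the per-task-type view.

```python
from collections import defaultdict
from datetime import date


def learning_curve(sessions, early_n=5):
    """sessions: iterable of (key, session_date, succeeded), where key is a
    developer or a task type. Returns {key: (early_rate, later_rate)};
    later_rate is None when there are no sessions after the first early_n."""
    by_key = defaultdict(list)
    for key, day, ok in sessions:
        by_key[key].append((day, ok))
    curves = {}
    for key, items in by_key.items():
        items.sort(key=lambda item: item[0])  # oldest session first
        early = [ok for _, ok in items[:early_n]]
        later = [ok for _, ok in items[early_n:]]
        early_rate = sum(early) / len(early)
        later_rate = sum(later) / len(later) if later else None
        curves[key] = (early_rate, later_rate)
    return curves


history = (
    [("dev-a", date(2024, 1, d), d <= 2) for d in range(1, 6)]    # 2 of 5 early sessions succeed
    + [("dev-a", date(2024, 3, d), True) for d in range(1, 4)]    # 3 of 3 later sessions succeed
    + [("dev-b", date(2024, 1, d), True) for d in range(1, 4)]    # only 3 sessions so far
)
print(learning_curve(history))
# {'dev-a': (0.4, 1.0), 'dev-b': (1.0, None)}
```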

The Improvement Curve

According to industry case studies from Accenture and Mercedes-Benz, AI tools can save developers around 30 minutes per day. But that’s an average across mature users.

New AI users don’t hit that number on day one. They need to learn:

- How to write effective prompts

- When to use AI vs. when to code manually

- How to validate and review AI output

- Which tasks are good AI candidates

Without session-level tracking, you can’t identify who needs coaching, what tasks work best for AI, or whether your investment is paying off.
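Flagging who might need coaching can start as a simple rule. The sketch below assumes you already have each developer’s first AI session date and a recent success rate computed from session records over some window. It borrows the 11-week figure from the Microsoft research cited earlier as a grace period; the `coaching_candidates` name and the 0.15 gap are arbitrary, hypothetical choices you would tune.

```python
from datetime import date, timedelta
from statistics import median

# Assumption: treat the 11-week figure as a ramp-up period during which
# low success rates are expected and not flagged.
RAMP_UP = timedelta(weeks=11)


def coaching_candidates(first_use, recent_rates, today, gap=0.15):
    """first_use: {developer: date of first AI session}
    recent_rates: {developer: success rate over a recent window, 0.0-1.0}
    Returns developers past the ramp-up period whose recent rate trails the
    team median by more than `gap`."""
    team_median = median(recent_rates.values())
    flagged = []
    for dev, rate in recent_rates.items():
        past_ramp_up = today - first_use[dev] >= RAMP_UP
        if past_ramp_up and rate < team_median - gap:
            flagged.append(dev)
    return sorted(flagged)


first_use = {
    "dev-a": date(2024, 1, 8),
    "dev-b": date(2024, 1, 8),
    "dev-c": date(2024, 2, 5),
    "dev-d": date(2024, 1, 15),
    "dev-e": date(2024, 5, 20),   # started recently, still ramping up
}
recent_rates = {"dev-a": 0.85, "dev-b": 0.80, "dev-c": 0.75, "dev-d": 0.50, "dev-e": 0.40}
print(coaching_candidates(first_use, recent_rates, today=date(2024, 6, 1)))
# ['dev-d']
```

Comparing against the team median rather than a fixed bar keeps the rule meaningful as the whole team improves, and skipping recent adopters avoids flagging people who are simply still early on the curve.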

Real ROI Measurement

Let me walk through what actually measuring AI productivity looks like:

Before measurement:

- “We use AI tools” (unknown impact)

- Multiple provider licenses (unknown relative value)

- No data on effectiveness by task type

- No visibility into learning curves

After 3 months of session-level tracking:

- 24% reduction in PR cycle time for AI-assisted work (measured)

- Claude Code: 85% success rate → Keep and expand

- Copilot: 71% success rate → Reevaluate or provide additional training

- Saved $12K/year by canceling underperforming tools

- Identified 12 developers who need AI coaching

- Found that refactoring tasks see 90%+ success rates while bug fixes see only 76%

That’s the difference between knowing you adopted AI and knowing whether it’s working.
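A figure like the cycle-time reduction above is a relative comparison. Here is a minimal sketch of one way to compute it, assuming each PR is already labeled as AI-assisted or not and has a start-to-merge time in hours (both assumptions about your data), alongside success rates by task type. The function names are hypothetical.

```python
from collections import defaultdict
from statistics import median


def cycle_time_reduction(prs):
    """prs: iterable of (ai_assisted, cycle_time_hours).
    Returns 1 - median(AI-assisted) / median(manual); 0.24 means AI-assisted
    PRs merged 24% faster at the median."""
    prs = list(prs)
    ai = [hours for assisted, hours in prs if assisted]
    manual = [hours for assisted, hours in prs if not assisted]
    if not ai or not manual:
        raise ValueError("need at least one AI-assisted and one manual PR")
    return 1 - median(ai) / median(manual)


def success_by_task_type(sessions):
    """sessions: iterable of (task_type, succeeded). Returns {task_type: rate}."""
    totals, wins = defaultdict(int), defaultdict(int)
    for task_type, ok in sessions:
        totals[task_type] += 1
        wins[task_type] += ok
    return {t: wins[t] / totals[t] for t in totals}


prs = [(True, 30), (True, 38), (True, 40), (False, 45), (False, 50), (False, 60)]
print(f"{cycle_time_reduction(prs):.0%}")   # 24%
print(success_by_task_type([("refactoring", True), ("refactoring", True),
                            ("bug fix", True), ("bug fix", False)]))
```

Medians are used because a few long-running PRs can dominate a mean. This is still an observational comparison: if AI-assisted PRs skew toward smaller or simpler work, compare within the same task type before attributing the gap to AI.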

The Satisfaction Component

Remember those GitHub research numbers about satisfaction? 60-75% feeling more fulfilled, 73% staying in flow?

Those numbers map to two SPACE dimensions: Satisfaction and well-being, and Efficiency and flow. If you’re implementing the SPACE framework for developer productivity (you should be), AI experience is a critical dimension to measure.

A developer who loves their AI tool and uses it effectively is different from one who feels forced to use it and produces worse code as a result. The activity metric (both used AI) is identical. The outcome couldn’t be more different.

What About The Churn Problem?

The 41% higher churn rate for AI-generated code is concerning—but it’s also not the whole story.

The question isn’t whether AI code gets revised more. The question is: is the total time (generation + revision) still less than writing from scratch?

If AI helps you write a function in 5 minutes and you then spend 10 minutes revising it, the total is 15 minutes, so you still saved 15 minutes compared with 30 minutes of manual coding. But if AI produces code that takes longer to fix than it would have taken to write, you’ve lost time.

You can’t answer this question without session-level tracking that follows code from AI generation through PR merge and beyond.
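The break-even arithmetic in the function example can be written as a one-line function and summed across tasks. This is a sketch: `net_minutes_saved` is a hypothetical name, and the manual-coding time is an estimate you would have to supply (for instance from comparable non-AI tasks), because it is never directly observed.

```python
def net_minutes_saved(generate: float, revise: float, manual_estimate: float) -> float:
    """Minutes saved by using AI for one task.
    Positive: generation plus revision beat writing it by hand.
    Negative: fixing the AI output cost more than manual coding would have."""
    return manual_estimate - (generate + revise)


# (generate, revise, manual_estimate) in minutes for three hypothetical tasks
tasks = [
    (5, 10, 30),   # the function example: 15 minutes of AI work vs. 30 by hand
    (5, 40, 30),   # heavy churn: revision wipes out the gain
    (12, 3, 25),
]
per_task = [net_minutes_saved(*t) for t in tasks]
print(per_task, sum(per_task))   # [15, -15, 10] 10
```

A higher churn rate shows up here as a larger `revise` term; whether it erases the gain depends on how much time generation saved in the first place.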

Practical Implementation

Here’s how to actually measure AI productivity:

1. Track at the session level. Every AI coding session should capture duration, messages, tool calls, code output, task completion, and developer satisfaction.

2. Connect to delivery metrics. Link AI sessions to PRs, to deployments, and to production incidents. Does AI-assisted code have different outcomes?

3. Segment by provider. Different tools produce different results, so measure each one separately.

4. Segment by task type. AI is great at some tasks and mediocre at others. Measure each type separately: new features, refactoring, bug fixes, tests, and documentation.

5. Track over time. Learning curves are real. Compare week-over-week and month-over-month trends.

6. Survey developers. Quantitative metrics alone miss the experience. Are developers frustrated? Productive? Stuck? Ask them.
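Step 2 needs a join between session data and delivery data. A minimal sketch, assuming sessions can be matched to PRs by branch name (a hypothetical join key; commit SHAs or PR references in session logs would work too) and that incidents have already been attributed to PRs, could look like this. `PullRequest` and `incidents_by_ai_use` are illustrative names, not any platform’s API.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    number: int
    branch: str
    incidents: int  # production incidents later traced back to this PR


def mean_or_none(values):
    return sum(values) / len(values) if values else None


def incidents_by_ai_use(prs, ai_session_branches):
    """Mark a PR as AI-assisted if any AI session ran on its branch, then
    return (mean incidents per AI-assisted PR, mean incidents per manual PR)."""
    ai, manual = [], []
    for pr in prs:
        if pr.branch in ai_session_branches:
            ai.append(pr.incidents)
        else:
            manual.append(pr.incidents)
    return mean_or_none(ai), mean_or_none(manual)


prs = [
    PullRequest(101, "feat/search", 0),
    PullRequest(102, "feat/export", 1),
    PullRequest(103, "fix/login", 2),
    PullRequest(104, "fix/timeout", 0),
]
ai_branches = {"feat/search", "feat/export"}  # branches that had at least one AI session
print(incidents_by_ai_use(prs, ai_branches))  # (0.5, 1.0)
```

The same split works for deployment failures or post-merge rework; reporting a per-PR rate keeps teams with different PR volumes comparable.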

Beyond Binary Adoption

Stop settling for “are people using AI?” Start asking “is AI making us better?”

The difference between these questions is the difference between vendor marketing and actual engineering productivity. One tells you about tool adoption. The other tells you whether your investment is paying off.

The teams that will win with AI are the ones that measure actual outcomes, not just activity. They’ll know which tools work, which developers need help, and which tasks benefit most from AI assistance.

Everyone else will keep paying for tools they can’t prove are working.

Ready to Measure Real AI Productivity?

Track actual AI productivity with 119 session-level metrics across 5 providers. Compare tools, identify coaching opportunities, and measure real ROI.