The Only Platform Tracking Individual AI Coding Sessions

119 session-level metrics across 5 AI providers. Measure what other platforms can't: actual AI productivity and effectiveness.

What Other Platforms Miss

Other DX platforms track AI "adoption" (did someone use an AI tool?). GuideMode tracks AI effectiveness (did the AI help or hurt productivity?).

Other Platforms

- ❌ "AI adoption rate" (binary: used or not)

- ❌ No session-level tracking

- ❌ No AI provider comparison

- ❌ No productivity metrics

- ❌ Can't answer: "Did AI make us faster?"

GuideMode

- ✓ 119 session-level metrics

- ✓ Full conversation tracking

- ✓ Compare 5 AI providers

- ✓ Plan mode & todo efficiency

- ✓ Answers: "Which AI makes us most productive?"

Track All Major AI Coding Assistants

Comprehensive session tracking across every major AI coding platform

Claude Code

Full session tracking with plan mode, todo lists, and git operations

Cursor

Complete interaction monitoring and conversation tracking

GitHub Copilot

Session analytics and code suggestion tracking

Gemini Code Assist

Full conversation and tool usage tracking

OpenCode

Session monitoring and analytics

Compare AI providers side by side to see which makes your team most productive

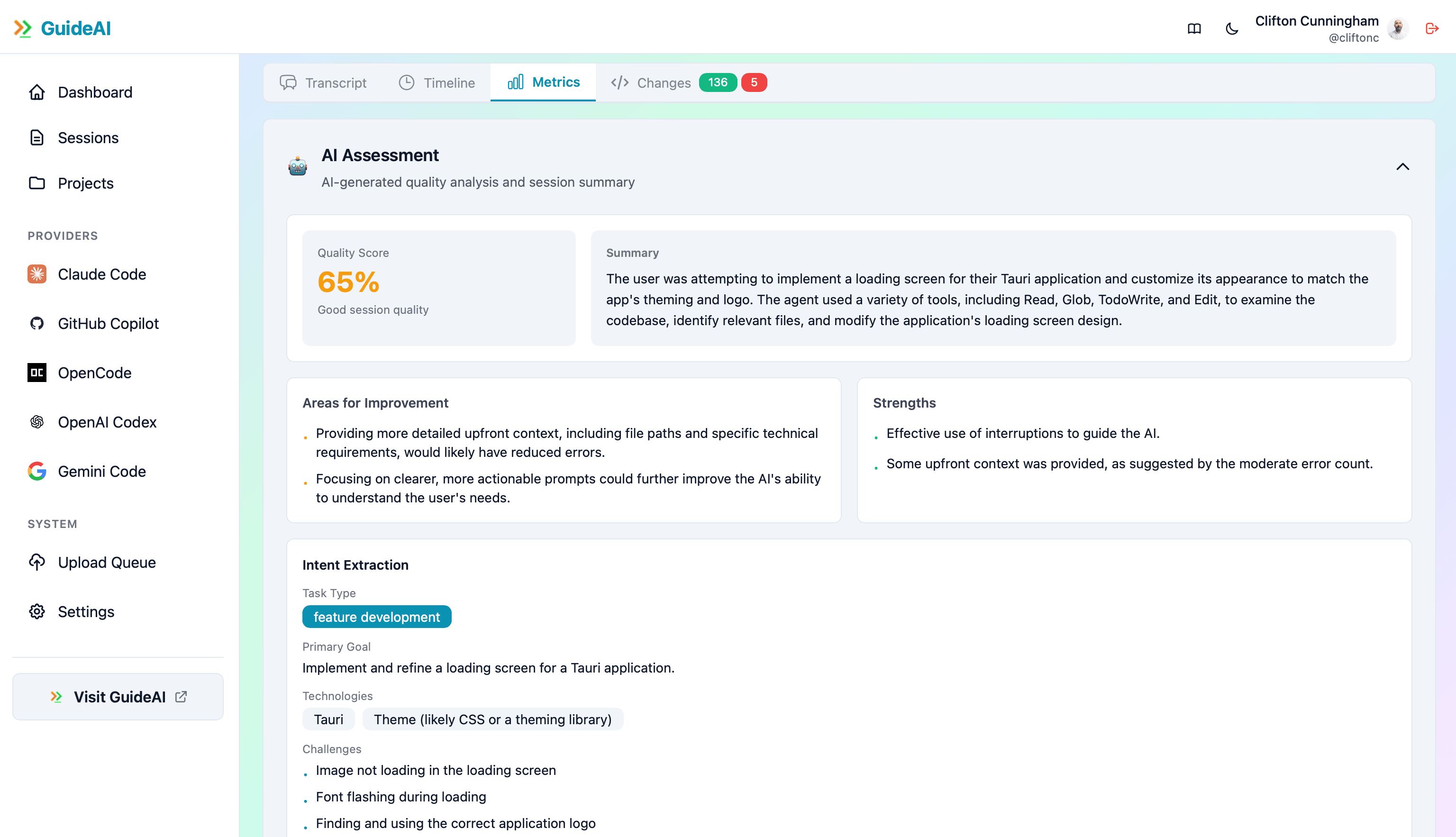

119 Session-Level Metrics

Deep insights into every AI coding session — from conversation quality to code output

Conversation Metrics

Message count, turn-taking, context length, token usage, conversation depth

Plan Mode Tracking

Plan mode usage, plan quality, implementation success rate, plan-to-code ratio

Todo List Analysis

Todo creation rate, completion rate, task breakdown quality, progress tracking

Git Operations

Commits per session, file change count, git efficiency ratio, commit message quality

Code Quality

Lines of code, file operations, edit efficiency, code review readiness

Productivity Metrics

Session duration, tasks completed, output quality, developer satisfaction

Session Success

Goal achievement, error resolution, code acceptance rate, user satisfaction

Tool Usage

Tool call frequency, tool success rate, tool diversity, automation level

Learning Velocity

Improvement over time, skill development, pattern recognition, efficiency gains

AI Productivity Cube

Dedicated analytics cube for AI productivity tracking with 7 dimensions and 6 measures

Dimensions

Measures

Example Analysis: "Which AI provider has the highest success rate for refactoring tasks on the backend team?"
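
To make the example question concrete, here is a minimal sketch of that kind of analysis over a handful of made-up session records. The field names (`provider`, `team`, `task_type`, `succeeded`) and the helper `success_rate_by_provider` are hypothetical, chosen for illustration only; they are not GuideMode's actual schema or API, and the sample data is not a real measurement.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    # Hypothetical fields for illustration; not GuideMode's real schema.
    provider: str
    team: str
    task_type: str
    succeeded: bool


def success_rate_by_provider(sessions, team, task_type):
    """Return {provider: fraction of matching sessions that succeeded}."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for s in sessions:
        # Keep only sessions for the requested team and task type.
        if s.team == team and s.task_type == task_type:
            totals[s.provider] += 1
            wins[s.provider] += int(s.succeeded)
    return {p: wins[p] / totals[p] for p in totals}


# Made-up sample data, not real measurements.
sessions = [
    Session("Claude Code", "backend", "refactoring", True),
    Session("Claude Code", "backend", "refactoring", False),
    Session("Cursor", "backend", "refactoring", True),
    Session("Cursor", "frontend", "refactoring", False),
    Session("GitHub Copilot", "backend", "bugfix", True),
]

rates = success_rate_by_provider(sessions, "backend", "refactoring")
best = max(rates, key=rates.get)  # provider with the highest success rate
```

Team and task type act as filters and provider as the grouping key, which mirrors how a dimension/measure query is usually phrased: filter on some dimensions, group by another, then aggregate a measure.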

Real Questions, Real Answers

AI session intelligence lets you answer questions other platforms can't

"Which AI provider makes our team most productive?"

Compare session success rates, task completion, and code output across Claude Code, Cursor, Copilot, Gemini, and OpenCode

"Is our AI productivity improving over time?"

Track learning velocity, session efficiency trends, and skill development month over month

"Does plan mode actually help?"

Compare success rates for sessions with vs without plan mode across different task types

"What's our actual ROI on AI coding tools?"

Measure productivity gains, time savings, and code quality improvements with hard data

"Which developers need AI coaching?"

Identify struggling developers and share best practices from high performers

"Are we using AI for the right tasks?"

Break down task categories by success rate to find AI's sweet spot

Privacy-First by Design

With the desktop app, AI sessions are monitored locally on your machine by default. You control what (if anything) syncs to the cloud.

All tracking happens on your machine

Sync nothing, metrics only, or full sessions

Different privacy settings for each project
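
As a rough sketch of how the three sync levels and per-project settings could fit together, the example below picks a sync mode per project and returns only the part of a session that is allowed to leave the machine. Everything here is an assumption made for illustration: the `SyncMode` names, the project paths, the no-sync default, and the `payload_to_sync` helper are hypothetical and do not describe GuideMode's real configuration format.

```python
from enum import Enum


class SyncMode(Enum):
    # The three levels named in the text; the identifiers are assumptions.
    NO_SYNC = "no_sync"
    METRICS_ONLY = "metrics_only"
    FULL_SESSIONS = "full_sessions"


# Hypothetical per-project overrides; paths and default are illustrative.
DEFAULT_MODE = SyncMode.NO_SYNC
PROJECT_MODES = {
    "/work/internal-api": SyncMode.METRICS_ONLY,
    "/work/open-source-lib": SyncMode.FULL_SESSIONS,
}


def payload_to_sync(project_path, session):
    """Return the part of a session that may leave the machine, or None."""
    mode = PROJECT_MODES.get(project_path, DEFAULT_MODE)
    if mode is SyncMode.NO_SYNC:
        return None  # nothing is uploaded
    if mode is SyncMode.METRICS_ONLY:
        return {"metrics": session["metrics"]}  # transcript stays local
    return {"metrics": session["metrics"], "transcript": session["transcript"]}


# Made-up session for demonstration.
session = {"metrics": {"message_count": 12}, "transcript": ["hi", "hello"]}
```

In this sketch a project without an explicit setting falls back to syncing nothing, so data only leaves the machine after an explicit opt-in.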

Two Ways to Get Started

Choose the setup that fits your workflow: a full desktop app or a lightweight Claude Code plugin

Desktop App

Full-featured session monitoring

- + All 5 AI providers (Claude Code, Cursor, Copilot, Gemini, OpenCode)

- + Local analytics and session storage

- + Granular sync control (No Sync, Metrics Only, Full Sessions)

- + Per-project privacy settings

- + Background session watcher

Claude Code Plugin

Lightweight alternative for Claude Code users

- + No app to install — runs as a Claude Code hook

- + Zero background processes, minimal overhead

- + Configurable sync timing (per-response, session end, or both)

- + Auto-captures git metadata (branch, commit, remote)

- + Set up in seconds with /guidemode-setup

Both options feed into the same GuideMode platform — same analytics, same dashboards, same team insights. The desktop app supports all 5 providers and can operate in completely private local-only mode. The Claude Code plugin always uploads full session transcripts to GuideMode.

Start Tracking AI Productivity

The only platform measuring actual AI effectiveness, not just adoption. Get insights other platforms can't provide.