The Discovery Gap: What Other DX Platforms Miss

Traditional DX platforms measure how fast you ship, but not whether you're shipping the right things. Here's what they miss and why it matters.

Here’s a scenario that plays out constantly:

Your team has great DORA metrics. You deploy 10 times per day. Lead time is under an hour. Change failure rate is impressively low.

Yet customer satisfaction is dropping. Features get built but don’t get used. The product feels directionless. Engineers are frustrated that their work doesn’t seem to matter.

What’s going wrong?

You’re optimizing for delivery speed without validating what you’re delivering. You’ve closed the delivery gap but completely ignored the discovery gap.

The Missing 40%

Most software development follows this pattern:

- Discovery (30-40% of time): Research, validation, planning

- Delivery (60-70% of time): Building, testing, deploying

Traditional DX platforms only measure the second of these: delivery.

That’s a huge blind spot. You’re paying for tools that measure only about two-thirds of your team’s work, and arguably not the most important part.

What Continuous Discovery Actually Means

Teresa Torres, who’s coached hundreds of product teams and literally wrote the book on this (Continuous Discovery Habits), defines continuous discovery as:

“Weekly touchpoints with customers by the team building the product, where they conduct small research activities in pursuit of a desired outcome.”

That’s the standard. Weekly customer contact. By the actual team—not just a PM or researcher. Small, continuous research activities.

How many teams actually do this? How would you even know if your teams do this?

If you’re not measuring discovery, you’re flying blind on something that directly predicts product success.

What Happens During Discovery

During discovery, teams should be:

Talking to Customers

- User interviews (weekly, ideally)

- Feedback sessions on prototypes

- Beta testing with real users

- Support ticket analysis for patterns

Validating Hypotheses

Torres recommends using Opportunity Solution Trees—mapping business outcomes to opportunities (customer needs) to potential solutions. But the key is validation: testing assumptions before building.

Her framework identifies five categories of assumptions to test:

- Desirability: Do customers actually want this?

- Usability: Can they figure out how to use it?

- Feasibility: Can we actually build it?

- Viability: Does the business case work?

- Ethical: Is there potential harm?

Making Decisions

- Prioritizing based on evidence, not opinions

- Killing ideas that don’t validate (this is success, not failure)

- Refining requirements based on what you learned

- Planning implementation with confidence

None of this shows up in DORA metrics. Not one line of it.

The Real Cost of Skipping Discovery

When teams don’t measure (and therefore don’t prioritize) discovery:

They Build the Wrong Things

Without validation, you’re guessing. Research shows that most product ideas don’t work—the exact failure rate varies by study, but it’s consistently high. If you’re not testing assumptions, you’re just hoping you’re in the lucky minority.

They Waste Massive Resources

Building features nobody wants is expensive. Not just the development time—though that’s significant. Also:

- Design iterations on the wrong concept

- Code reviews that approve the wrong solution

- Testing for features that shouldn’t exist

- Documentation nobody will read

- Maintenance burden forever

- Support for confused users

They Accumulate Bad Technical Debt

Features built on unvalidated assumptions often need major rewrites when reality hits. The initial implementation was based on wrong mental models—so even if the code is “clean,” it’s solving the wrong problem.

They Destroy Team Morale

This one’s underrated. Engineers, designers, and PMs all feel demoralized when their work gets ignored or scrapped. High delivery velocity with low feature adoption is a recipe for burnout and attrition.

The AI Amplification Problem

This matters even more with AI coding tools.

When AI can accelerate code generation by 50-60%, you can build the wrong things faster than ever. AI can also weaken discovery itself: Teresa Torres recently warned that AI summaries can miss 20-40% of important detail when synthesizing customer research.

Speed without direction is just expensive noise.

If your discovery process is weak, AI doesn’t fix it—AI accelerates the waste.

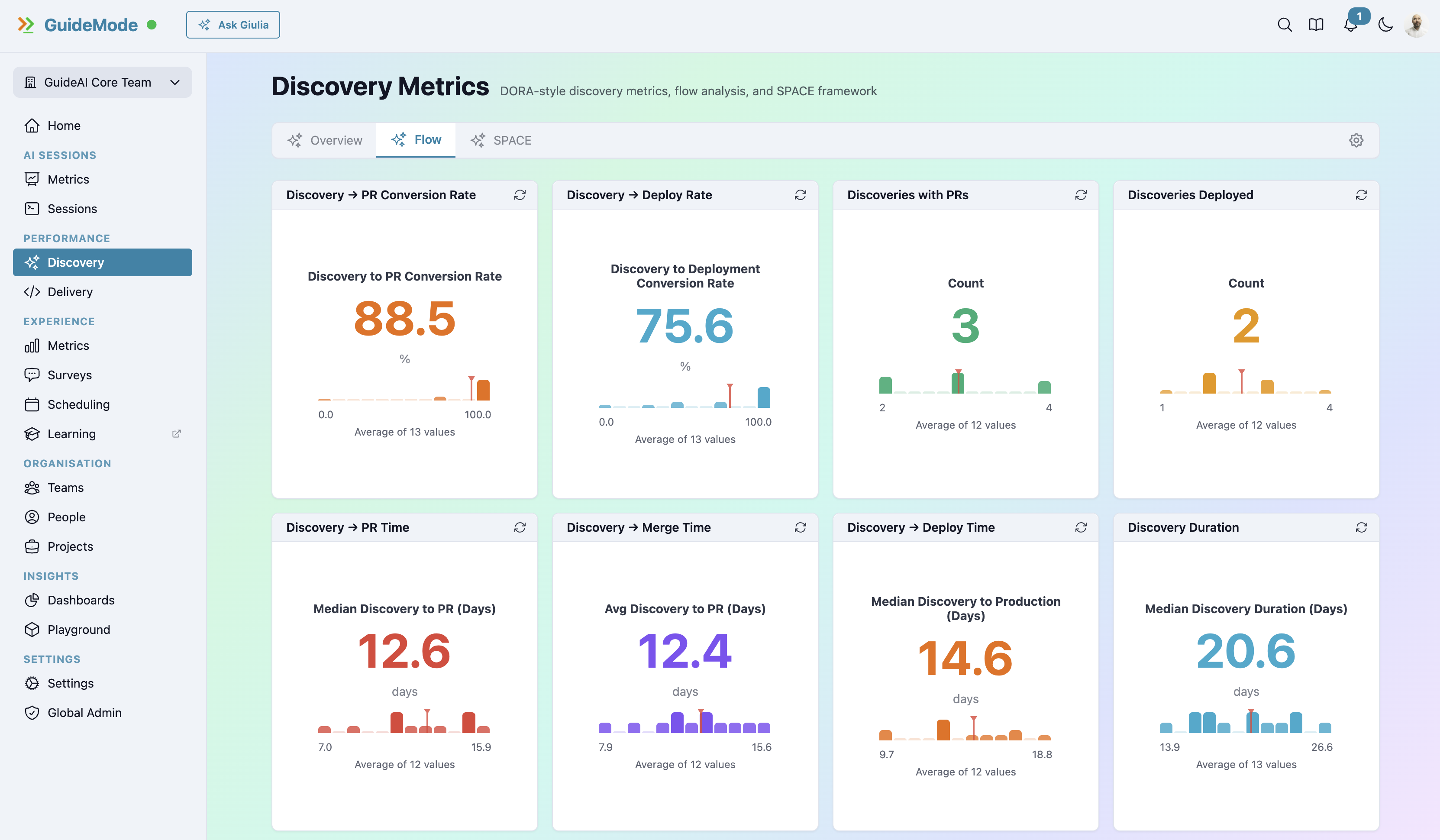

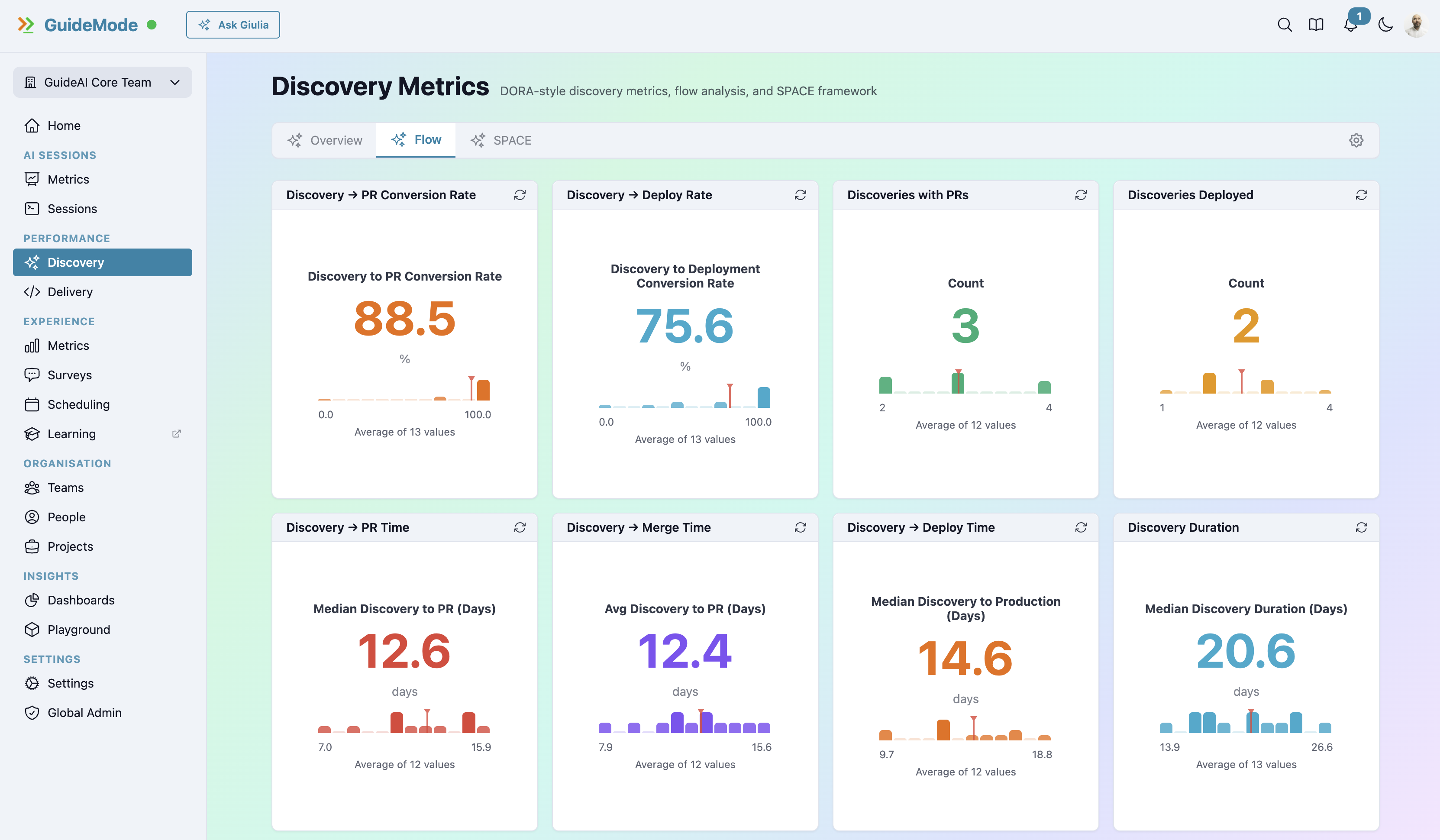

What Discovery Metrics Look Like

Here’s what you should be measuring:

Customer Touchpoint Frequency

How often do teams actually talk to customers? Weekly is the standard. Monthly is struggling. Never is failing.

Track this by team. Compare against feature success rates. You’ll find patterns.
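One way to make this countable is sketched below. It assumes each customer conversation is logged with a team name and a date. The `Touchpoint` record, the helper names, and the rule that maps coverage onto "weekly", "struggling", and "failing" are illustrative assumptions, not the API of any particular platform.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Touchpoint:
    """Hypothetical log entry for one customer conversation."""
    team: str
    day: date


def weekly_touchpoints(touchpoints):
    """Count touchpoints per team per ISO week: {team: {(year, week): count}}."""
    counts = defaultdict(lambda: defaultdict(int))
    for tp in touchpoints:
        year, week, _ = tp.day.isocalendar()
        counts[tp.team][(year, week)] += 1
    return {team: dict(weeks) for team, weeks in counts.items()}


def cadence_label(weeks_with_contact, weeks_observed):
    """Assumed mapping of contact coverage onto the rough labels used above."""
    if weeks_with_contact == 0:
        return "failing"
    if weeks_with_contact >= weeks_observed:
        return "weekly"
    return "struggling"


# Made-up sample data covering four consecutive weeks.
log = [
    Touchpoint("checkout", date(2024, 3, 4)),
    Touchpoint("checkout", date(2024, 3, 12)),
    Touchpoint("checkout", date(2024, 3, 20)),
    Touchpoint("checkout", date(2024, 3, 26)),
    Touchpoint("search", date(2024, 3, 5)),
]
per_week = weekly_touchpoints(log)
weeks_observed = 4
for team in ("checkout", "search", "billing"):
    covered = len(per_week.get(team, {}))
    print(team, cadence_label(covered, weeks_observed))
```

Counting distinct weeks with contact, rather than total conversations, keeps one busy research week from hiding three silent ones.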

Validation Rate

What percentage of hypotheses survive testing? This isn’t about high numbers—sometimes killing ideas is exactly right. But you need visibility into whether validation is happening at all.
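As a rough sketch, the two questions here (is validation happening, and how many ideas survive it) can be kept as separate numbers. The `Hypothesis` record and the `validation_summary` helper are hypothetical names chosen for illustration, and the sample backlog is made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Hypothesis:
    """Hypothetical record: validated is None when the idea was never tested."""
    name: str
    validated: Optional[bool]


def validation_summary(hypotheses):
    """Return the share of ideas tested and the share of tested ideas that survived."""
    tested = [h for h in hypotheses if h.validated is not None]
    survived = [h for h in tested if h.validated]
    total = len(hypotheses)
    return {
        "tested_share": len(tested) / total if total else 0.0,
        "validation_rate": len(survived) / len(tested) if tested else None,
    }


# Made-up backlog: four ideas tested, one survived, one never tested.
backlog = [
    Hypothesis("one-click reorder", True),
    Hypothesis("dark mode", False),
    Hypothesis("CSV export", False),
    Hypothesis("team dashboards", False),
    Hypothesis("AI summaries", None),
]
summary = validation_summary(backlog)
print(summary)  # {'tested_share': 0.8, 'validation_rate': 0.25}
```

Reporting `tested_share` next to `validation_rate` avoids reading a low survival rate as a problem when the real gap is that little gets tested at all.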

Learning Velocity

How quickly are teams invalidating bad ideas? Fast failure is good—it saves resources. Slow discovery cycles mean slow learning.
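One possible way to put a number on this is the median time between proposing an idea and killing it. The sketch below assumes each idea is stored as a `(proposed, decided, outcome)` tuple with outcome `"killed"` or `"kept"`. That shape and the `learning_velocity_days` name are assumptions for illustration, and the dates are made up.

```python
from datetime import date
from statistics import median


def learning_velocity_days(ideas):
    """Median days from proposing an idea to killing it (killed ideas only)."""
    durations = [
        (decided - proposed).days
        for proposed, decided, outcome in ideas
        if outcome == "killed"
    ]
    return median(durations) if durations else None


# Made-up sample data.
ideas = [
    (date(2024, 3, 1), date(2024, 3, 8), "killed"),    # 7 days
    (date(2024, 3, 1), date(2024, 3, 4), "killed"),    # 3 days
    (date(2024, 3, 10), date(2024, 3, 31), "killed"),  # 21 days
    (date(2024, 3, 5), date(2024, 3, 19), "kept"),     # ignored: not invalidated
]
print(learning_velocity_days(ideas))  # 7
```

The median is used instead of the mean so that one idea that lingered for months does not dominate the figure.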

Discovery Satisfaction (SPACE Surveys)

Are teams happy with the discovery process? Do they have enough time for research? Are they confident in their validations? Is cross-functional collaboration working?

Research Quality Indicators

- Are customer insights documented and shared?

- Do teams use opportunity solution trees or similar frameworks?

- How many assumptions are tested before building begins?

Connecting Discovery to Delivery

The real power comes from connecting both halves:

| Discovery Metric | Delivery Impact |
|---|---|
| Higher customer touchpoints | Higher feature adoption |
| Better validation rates | Less rework |
| Faster learning velocity | Shorter overall cycle time |
| Higher discovery satisfaction | Faster delivery (teams know what to build) |

These correlations only become visible when you measure both sides.
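A minimal sketch of how such a relationship could be checked once both sides are recorded, using a hand-written Pearson correlation. The per-team figures are invented for illustration, `pearson` is a hypothetical helper, and a correlation on a handful of teams is only a prompt for further investigation, not proof of cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Invented per-team figures: customer touchpoints per month and
# the adoption rate of features the same team shipped.
touchpoints_per_month = [0, 1, 2, 4, 5]
feature_adoption = [0.10, 0.15, 0.20, 0.35, 0.40]

r = pearson(touchpoints_per_month, feature_adoption)
print(f"touchpoints vs adoption: r = {r:.2f}")
```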

The Comparison

Other platforms tell you:

- “You deployed 47 times this week”

- “Your lead time is 2.3 hours”

With discovery metrics, you can see:

- “You deployed 47 times, but only 12 features had validated discovery”

- “Your lead time is 2.3 hours, and validated features ship 40% faster”

That’s a completely different level of insight.

Getting Started

You don’t need to transform your process overnight. Start with:

1. Track Customer Touchpoints: Even informally, how many customer conversations did each team have this week? Just counting creates awareness.

2. Ask About Discovery Satisfaction: Add discovery-focused questions to your developer surveys. Are teams confident in their validations? Do they have time for research?

3. Connect Features to Validation: Start tagging: was this feature validated before building? Track adoption rates for validated vs. unvalidated features.

4. Measure the Complete Cycle: Don’t stop at “time to deploy.” Measure time from insight to impact. That’s the real cycle time.
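Steps 3 and 4 can start as a spreadsheet, but a small script shows the idea. The sketch below assumes each shipped feature carries a `validated` tag, an adoption rate, the date of the originating customer insight, and the date it showed measurable impact. The `Feature` fields, helper names, and all values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean, median


@dataclass
class Feature:
    """Hypothetical feature record with a discovery tag."""
    name: str
    validated: bool       # was it validated before building?
    adoption_rate: float  # share of eligible users who use it
    insight_day: date     # when the customer insight was captured
    impact_day: date      # when measurable impact was observed


def adoption_by_validation(features):
    """Mean adoption rate for validated vs. unvalidated features."""
    groups = {True: [], False: []}
    for f in features:
        groups[f.validated].append(f.adoption_rate)
    return {
        "validated": mean(groups[True]) if groups[True] else None,
        "unvalidated": mean(groups[False]) if groups[False] else None,
    }


def insight_to_impact_days(features):
    """Median days from customer insight to observed impact."""
    spans = [(f.impact_day - f.insight_day).days for f in features]
    return median(spans) if spans else None


# Made-up sample data.
shipped = [
    Feature("saved carts", True, 0.5, date(2024, 1, 8), date(2024, 2, 5)),
    Feature("bulk edit", True, 0.25, date(2024, 1, 15), date(2024, 1, 29)),
    Feature("theme picker", False, 0.125, date(2024, 2, 1), date(2024, 3, 14)),
]
print(adoption_by_validation(shipped))  # {'validated': 0.375, 'unvalidated': 0.125}
print(insight_to_impact_days(shipped))  # 28
```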

The Product Trio

If you’re serious about this, Torres recommends the product trio approach: Product Manager, Designer, and Engineer working together on discovery—not just delivery.

That means engineers in customer interviews. Designers validating assumptions. PMs with hands-on research, not just requirements docs.

This is a different way of working for many teams. Measuring discovery makes it visible—and creates accountability for doing it well.

Ready to Close the Discovery Gap?

Stop flying blind on up to 40% of your team’s work. Measure discovery alongside delivery for the complete picture of product development effectiveness.