Qualitative + Quantitative: The Complete DX Picture

Hard metrics tell you what happened. Qualitative data tells you why. You need both, and most teams go wrong by relying on one alone.

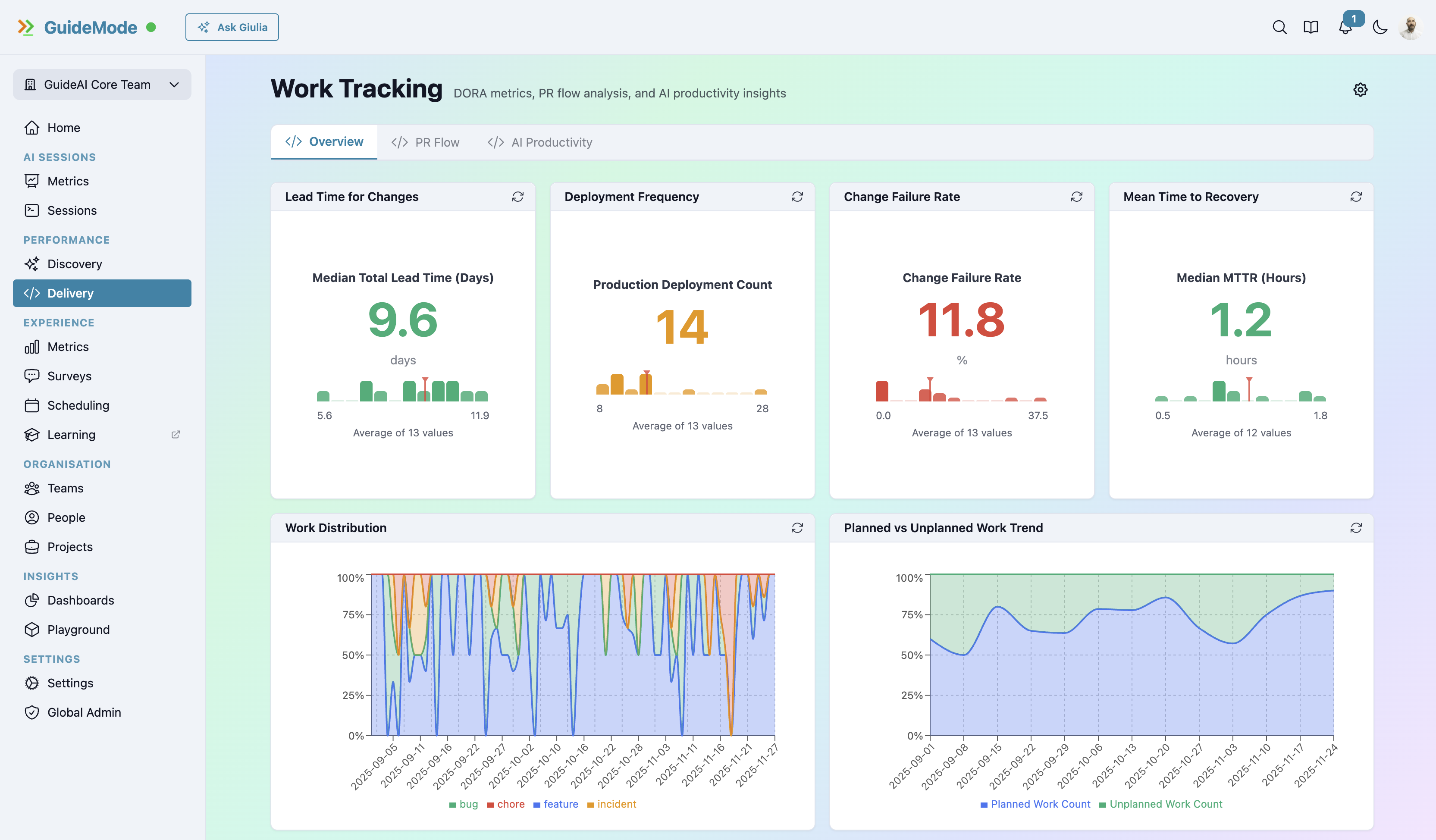

Your DORA metrics look great:

- Deployment frequency: 15/day ✓

- Lead time: 1.2 hours ✓

- Change failure rate: 8% ✓

- MTTR: 45 minutes ✓

But developers are unhappy. Attrition is up. The velocity feels unsustainable.

What’s going on?

The Problem with Numbers Alone

Hard metrics tell you what is happening. They don’t tell you why or how it feels.

This is a fundamental limitation of quantitative measurement. You can see that PRs take 4 hours to merge, but you can’t see:

- Is 4 hours too long because of process issues?

- Is it too long because of review bottlenecks?

- Is it actually fine because the reviews are thorough and valuable?

- Are developers frustrated with it or do they appreciate it?

The number alone is meaningless without context.

Research Backs This Up

The SPACE framework research from GitHub, Microsoft, and the University of Victoria explicitly addresses this. Nicole Forsgren and colleagues found that productivity measurement requires both:

- System-level metrics (deployments, cycle times, failure rates)

- Perception-level data (surveys, self-assessment, satisfaction)

Neither alone tells the complete story. As the researchers noted, “the perceptions of developers are an especially important dimension to capture.”

Why? Because a team hitting great numbers while burning out is not a successful team—it’s a ticking time bomb.

What Quantitative Measures

Quantitative metrics measure observable outputs and outcomes:

Delivery Metrics:

- Deployment frequency and stability

- PR cycle time and throughput

- Lead time for changes

- Change failure rate

Activity Metrics:

- Commits, PRs, issues closed

- AI session counts and duration

- Code output volume

Quality Metrics:

- Bug escape rates

- Rework rates

- Test coverage

These are important. They’re objective. They’re comparable. But they’re incomplete.

What Qualitative Captures

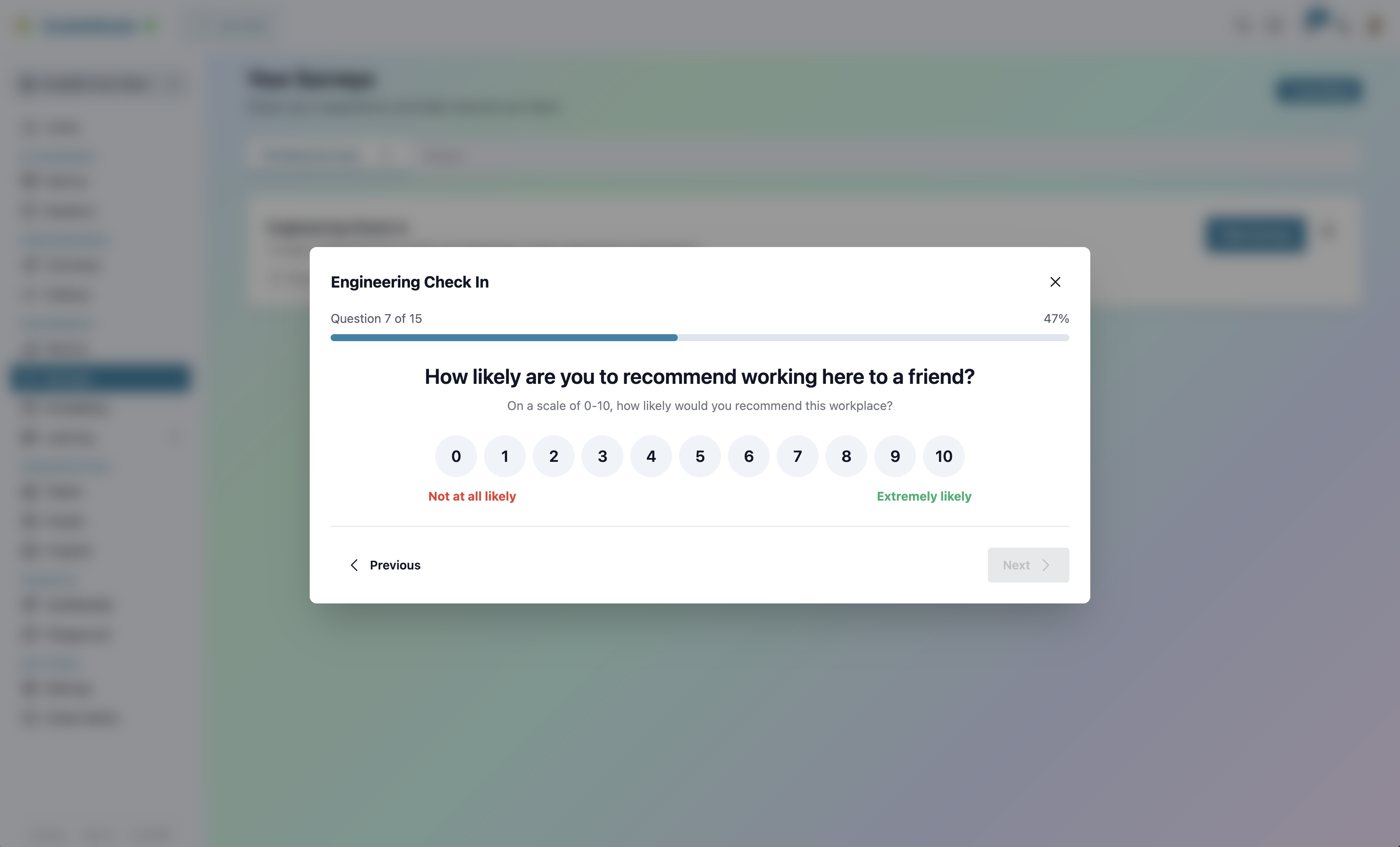

Qualitative data—surveys, interviews, self-assessment—captures what numbers miss:

The “Why” Behind Numbers:

- Why do PRs take that long? (Process? Reviews? Requirements?)

- Why is deployment frequency high? (Good automation? Pressure? Small changes?)

- Why is the bug rate what it is? (Testing practices? Time pressure? Skill gaps?)

The Sustainability Question:

- Is this pace maintainable?

- Are people burning out?

- Would developers recommend working here?

The Improvement Opportunities:

- What would make things better?

- Where is friction hiding?

- What’s working that should be protected?

Connecting the Dots

The power comes from correlating quantitative and qualitative. Here are real scenarios:

Scenario 1: Fast but Unsustainable

Quantitative:

- Deployment frequency: 18/day (HIGH)

- Lead time: 0.9 hours (excellent)

- PR review time: 12 minutes (excellent)

Qualitative:

- Developer satisfaction: 3.2/10 (LOW)

- Sustainable pace: 2.1/10 (LOW)

- Burnout indicators: 8.7/10 (HIGH)

What’s Happening: The numbers look amazing. Leadership is probably celebrating these metrics.

But the team is burning out. The speed is real, but it’s unsustainable. If you only looked at quantitative data, you’d think everything was fine. If you only looked at qualitative, you’d wonder why people are unhappy when “metrics are good.”

Together, the picture is clear: slow down before attrition hits.

Scenario 2: Slow but Healthy

Quantitative:

- Lead time: 4.2 hours (slower than benchmark)

- PR review time: 2.1 hours (slower than benchmark)

- Code quality metrics: 8.1/10 (excellent)

Qualitative:

- Developer satisfaction: 7.8/10 (HIGH)

- Learning from reviews: 8.9/10 (HIGH)

- Process satisfaction: 7.2/10 (GOOD)

What’s Happening: The numbers suggest “inefficiency.” Lead time and review time are higher than benchmarks.

But developers love it. They’re learning from thorough reviews. Code quality is exceptional. Satisfaction is high.

This team doesn’t need “optimization.” They’ve found a balance that works. Speeding them up would likely hurt quality and satisfaction.

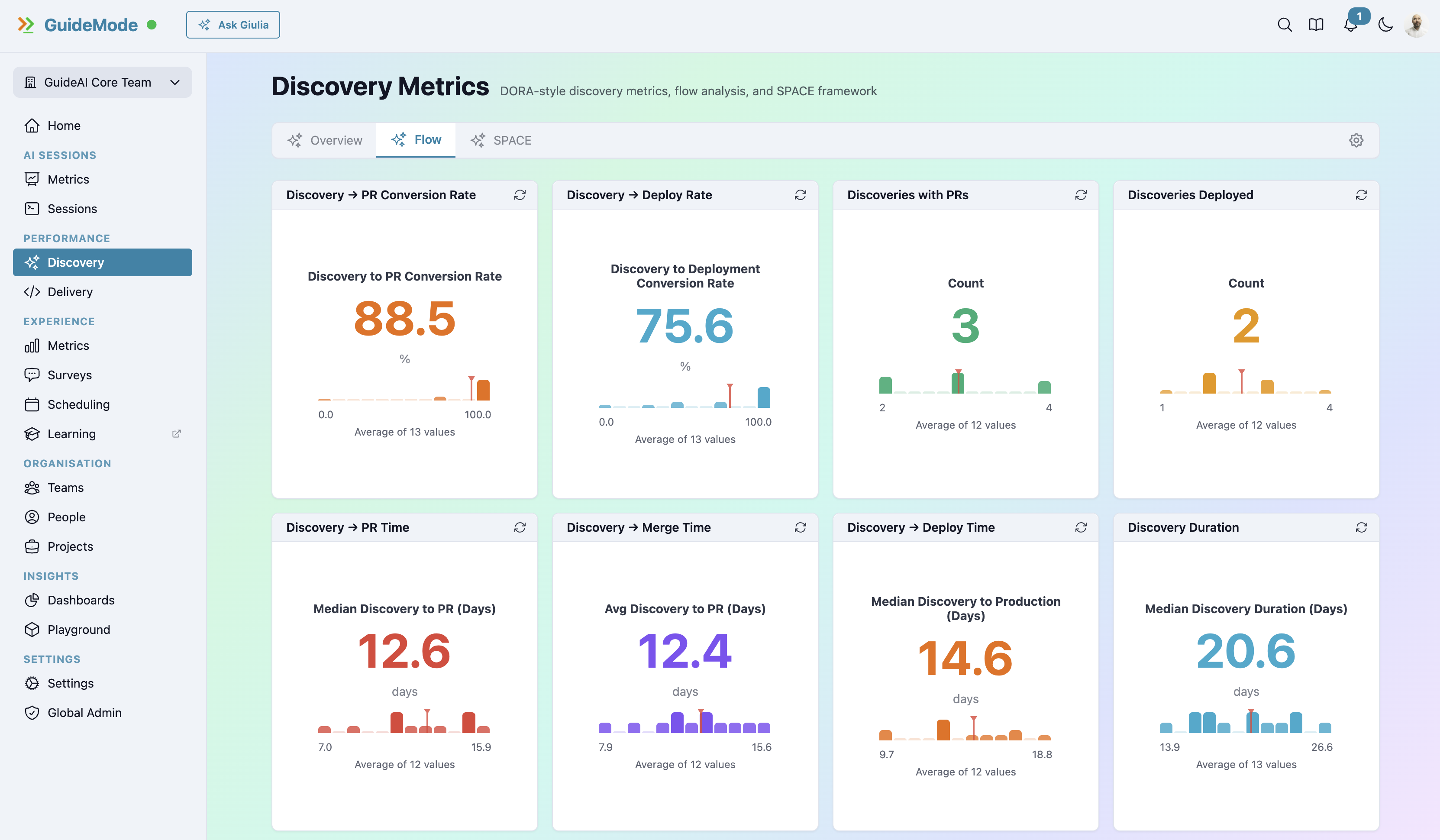

Scenario 3: Discovery Failure

Quantitative:

- Features shipped: 23 (HIGH)

- Deployment success: 96% (HIGH)

- Customer adoption: 31% (LOW)

Qualitative:

- Discovery satisfaction: 4.1/10 (LOW)

- Customer interaction frequency: 2.3/10 (LOW)

- Validation confidence: 3.8/10 (LOW)

What’s Happening: The delivery machine is working perfectly. Shipping is fast and deployments are stable.

But nobody’s using the features. The qualitative data explains why: teams aren’t confident in their validation, they’re not talking to customers, and the discovery process feels broken.

The problem isn’t delivery; it’s discovery. Quantitative data alone would flag the low adoption but could not explain it.

The SPACE Framework Approach

The SPACE framework was designed specifically to address this quantitative-qualitative gap. It measures across five dimensions, most of which draw on both types of data:

Satisfaction (primarily qualitative):

- Job satisfaction surveys

- Process satisfaction

- Tool satisfaction

- Burnout indicators

Performance (mixed):

- Objective quality metrics + self-assessed impact

- Customer outcomes + perception of success

Activity (primarily quantitative):

- Deployments, PRs, commits

- Contextualised by self-reported work distribution

Communication (mixed):

- Review turnaround + collaboration quality surveys

- Meeting time + self-assessed effectiveness

Efficiency (mixed):

- Cycle times + flow state frequency

- Wait times + interruption perception

This multi-dimensional approach forces you to look at both data types together.
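One way to keep both data types in view is to write the mapping down as data and check it. The sketch below is only an illustration: `SPACE_SIGNALS` and `single_source_dimensions` are hypothetical names, and the entries simply restate the lists above.

```python
# Hypothetical mapping of each SPACE dimension to the signals listed above.
SPACE_SIGNALS = {
    "Satisfaction": {
        "quantitative": [],
        "qualitative": ["job satisfaction", "process satisfaction",
                        "tool satisfaction", "burnout indicators"],
    },
    "Performance": {
        "quantitative": ["objective quality metrics", "customer outcomes"],
        "qualitative": ["self-assessed impact", "perception of success"],
    },
    "Activity": {
        "quantitative": ["deployments", "PRs", "commits"],
        "qualitative": ["self-reported work distribution"],
    },
    "Communication": {
        "quantitative": ["review turnaround", "meeting time"],
        "qualitative": ["collaboration quality", "self-assessed effectiveness"],
    },
    "Efficiency": {
        "quantitative": ["cycle times", "wait times"],
        "qualitative": ["flow state frequency", "interruption perception"],
    },
}


def single_source_dimensions(signals: dict) -> list:
    """Return the dimensions that rely on only one type of data."""
    return [
        name
        for name, sources in signals.items()
        if not sources["quantitative"] or not sources["qualitative"]
    ]


if __name__ == "__main__":
    print(single_source_dimensions(SPACE_SIGNALS))
```

With the lists above, the check flags only Satisfaction, which is described as primarily qualitative.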

Common Mismatches (And What They Mean)

Here’s a quick reference for interpreting qual/quant disconnects:

| Quantitative | Qualitative | Likely Reality |
|---|---|---|
| High velocity | Low satisfaction | Unsustainable pace, burnout risk |
| Low velocity | High satisfaction | Quality-focused, may be healthy |
| High activity | Low impact perception | Building wrong things |
| Low activity | High impact perception | Efficient, focused work |
| Fast reviews | Low collaboration scores | Rubber-stamping, not reviewing |
| Slow reviews | High learning scores | Thorough mentorship |

None of these patterns are visible from either data type alone.
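The quick reference can also be expressed as a small lookup. This is a minimal sketch, not a calibrated model: it assumes every score has already been normalised to a 0-10 scale, and the `HIGH` and `LOW` cut-offs, the metric names, and the `interpret` function are all hypothetical.

```python
# Hypothetical cut-offs on a 0-10 scale; calibrate against your own data.
HIGH = 7.0
LOW = 4.0

# (quantitative metric, qualitative metric) -> {(quant band, qual band): reading}
# Readings are copied from the quick-reference table; key names are illustrative.
MISMATCHES = {
    ("velocity", "satisfaction"): {
        ("high", "low"): "Unsustainable pace, burnout risk",
        ("low", "high"): "Quality-focused, may be healthy",
    },
    ("activity", "impact perception"): {
        ("high", "low"): "Building wrong things",
        ("low", "high"): "Efficient, focused work",
    },
    ("review speed", "collaboration"): {
        ("high", "low"): "Rubber-stamping, not reviewing",
    },
    ("review speed", "learning"): {
        ("low", "high"): "Thorough mentorship",
    },
}


def band(score: float) -> str:
    """Bucket a 0-10 score into 'high', 'low', or 'mid'."""
    if score >= HIGH:
        return "high"
    if score <= LOW:
        return "low"
    return "mid"


def interpret(quant_name: str, quant_score: float,
              qual_name: str, qual_score: float) -> str:
    """Look up the likely reality for one quantitative/qualitative pair."""
    readings = MISMATCHES.get((quant_name, qual_name), {})
    return readings.get((band(quant_score), band(qual_score)),
                        "No known mismatch")


if __name__ == "__main__":
    print(interpret("velocity", 9.0, "satisfaction", 3.2))
```

Scores that land in the middle band, or pairs that are not in the lookup, return "No known mismatch" rather than forcing a diagnosis.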

Practical Implementation

How do you actually combine qualitative and quantitative measurement?

1. Start with a Quantitative Baseline: Identify outliers and trends in hard metrics. What looks unusual? What’s changing?

2. Add Qualitative Context: Survey developers regularly (monthly for pulse surveys, quarterly for comprehensive SPACE surveys). Ask about the numbers you’re seeing.

3. Correlate Patterns: Look for disconnects. Great metrics with low satisfaction? Low metrics with high satisfaction? These mismatches reveal insights.

4. Act on Combined Insights: Don’t optimise for numbers alone. Don’t act on sentiment alone. Use both.

5. Track Both Over Time: Measure whether interventions improve both metrics AND sentiment. If you speed up cycle time but satisfaction drops, you haven’t improved; you’ve traded.
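Step 5 can be sketched as a comparison of two snapshots. The `Snapshot` fields and the `assess_intervention` function below are hypothetical, and the four labels are one possible reading of "improved" versus "traded", not a standard definition.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """One measurement period for a team (hypothetical fields)."""
    cycle_time_hours: float  # quantitative: lower is better
    satisfaction: float      # qualitative survey score: higher is better


def assess_intervention(before: Snapshot, after: Snapshot) -> str:
    """Label a change as improved, traded, regressed, or unchanged."""
    speed_gain = before.cycle_time_hours - after.cycle_time_hours  # > 0 means faster
    mood_gain = after.satisfaction - before.satisfaction           # > 0 means happier

    if speed_gain == 0 and mood_gain == 0:
        return "unchanged"
    if speed_gain >= 0 and mood_gain >= 0:
        return "improved"
    if speed_gain <= 0 and mood_gain <= 0:
        return "regressed"
    # One signal got better while the other got worse.
    return "traded"


if __name__ == "__main__":
    # Faster cycle time, lower satisfaction.
    print(assess_intervention(Snapshot(4.0, 7.5), Snapshot(2.0, 6.0)))
```

In practice you would probably want a tolerance band around zero so that survey noise is not read as a real change.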

The Danger of Only One

Quantitative Only:

- Can optimise for wrong outcomes

- Miss morale and retention risks

- Don’t understand root causes

- Can’t predict attrition

- May celebrate unsustainable success

Qualitative Only:

- Can’t prove impact objectively

- May miss real problems that feel fine

- Hard to track progress

- Susceptible to recency bias

- Difficult to compare across teams

Together: Complete picture. Context plus data. Outcomes plus sustainability.

The AI Angle

This matters especially for AI tool measurement. Consider this scenario:

Quantitative:

- AI session success rate: 68%

- Code output per session: Below non-AI average

- Bug rate: Slightly higher for AI-assisted code

If you only looked at numbers, you’d question the AI investment.

Qualitative:

- AI satisfaction: 8.9/10

- Learning value: 9.2/10

- Would recommend: 9.4/10

Developers love it. They’re learning. They see value.

The Complete Picture: AI isn’t (yet) making them faster, but developers report that it’s accelerating their learning. Research suggests it can take 11 weeks to fully realise AI productivity gains. The qualitative data suggests you’re on the right track: keep measuring, and expect the quantitative metrics to improve.

Without both data types, you’d make the wrong decision.

Ready for the Complete Picture?

Stop choosing between data and developer voice. Combine quantitative metrics with qualitative surveys for insights neither can provide alone.