Introducing DX²: Why Discovery + Delivery Matters

Most DX platforms only measure delivery. Here's why that's a problem—and how measuring both Discovery and Delivery gives you the complete picture.

If you’ve looked at developer experience (DX) platforms lately, you’ve probably noticed they all focus on the same thing: delivery metrics.

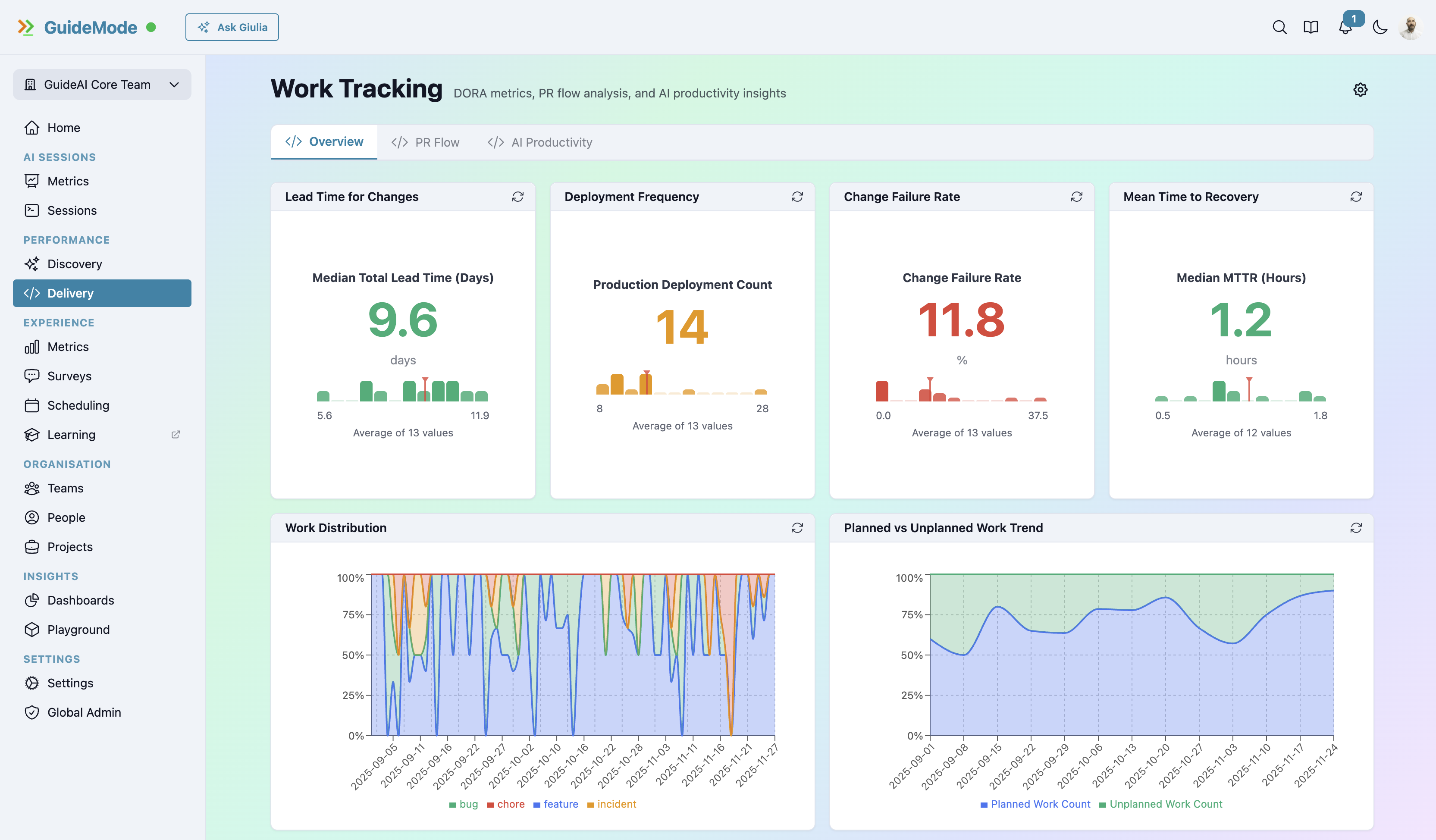

DORA metrics. Deployment frequency. Lead time for changes. Mean time to restore. Change failure rate.

These are important metrics. But here’s what nobody talks about: they only tell half the story.

The Discovery Problem

Before you can deliver great software, you need to discover what to build. That’s not a small thing—most teams spend 30-40% of their time on discovery activities. Research, validation, customer interviews, prototype testing, hypothesis checking.

Yet zero DX platforms measure this work.

Think about what that means. You can optimise your deployment pipeline to near-perfection, hit elite DORA numbers, ship code faster than ever—and still fail completely because you’re building the wrong thing.

Fast delivery of the wrong thing is just expensive waste.

The Double Diamond

The product world figured this out years ago. The Double Diamond model, developed by the British Design Council in 2005, visualizes the complete product development process as two diamonds:

First Diamond (Discovery):

- Discover: Research, customer interviews, understanding the problem space

- Define: Synthesize insights into a clear problem statement

Second Diamond (Delivery):

- Develop: Ideate and prototype solutions

- Deliver: Build, test, and ship

Maze’s breakdown of the process explains it well: the first diamond is about divergent then convergent thinking on the problem. The second is divergent then convergent thinking on the solution.

Current DX platforms only measure the second diamond. They’re completely blind to everything that happens before.

Dual Track Agile

If you’re running agile teams, you’ve probably encountered Dual Track Agile—the methodology of running discovery and delivery in parallel.

The concept goes back to Lynn Miller’s 2005 paper about “interconnected parallel design and development tracks,” later refined by Marty Cagan and Jeff Patton into “dual-track scrum.”

The key insight: discovery and delivery aren’t sequential phases. They run in parallel, with the outputs of discovery becoming the inputs of delivery.

Most teams already work this way. But they only measure half of it.

DX²: Discovery × Delivery

We call our approach DX² (DX-squared). The formula is simple:

DX² = Discovery × Delivery

Why multiply? Because neither dimension matters in isolation:

- Fast delivery of the wrong thing = waste

- Great discovery without delivery = ideas that never ship

- High DORA scores + building the wrong features = impressive vanity metrics

You need both, measured together, to understand true productivity.
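To make the “why multiply?” argument concrete, here is a minimal Python sketch. It assumes both Discovery and Delivery have already been normalised to scores between 0 and 1. The function names are hypothetical, and this is not how GuideMode computes DX². It compares the product with a simple average for a team that has elite delivery but almost no validated discovery.

```python
# Sketch of DX² = Discovery × Delivery as a composite score.
# Assumption: both inputs are already normalised to [0, 1]; how each score
# is derived (surveys, validation rates, DORA metrics) is out of scope here.


def dx_squared(discovery: float, delivery: float) -> float:
    """Multiplicative composite: a near-zero side drags the total near zero."""
    for name, value in (("discovery", discovery), ("delivery", delivery)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} score must be in [0, 1], got {value}")
    return discovery * delivery


def average(discovery: float, delivery: float) -> float:
    """Additive alternative, shown only for contrast."""
    return (discovery + delivery) / 2


# Elite delivery, almost no validated discovery:
print(f"average:    {average(0.1, 0.95):.3f}")     # average:    0.525
print(f"dx_squared: {dx_squared(0.1, 0.95):.3f}")  # dx_squared: 0.095
```

The average still gives the team a middling score for shipping the wrong thing quickly. The product collapses instead, which matches the first line of the list above.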

Who Does What

Discovery is trio work:

The discovery triad—Product Manager, Designer, and Engineer—works together on research and validation:

- Product Manager leads research and customer discovery

- Designer validates UX hypotheses and prototypes

- Engineer validates technical feasibility

- Together, they learn what to build

Delivery is engineering-focused:

Once you know what to build, engineering takes the lead:

- Build, test, and deploy validated features

- DORA metrics, PR analytics, and flow state tracking

- Code quality and deployment velocity

Both phases have measurable activities. Only one currently gets measured.

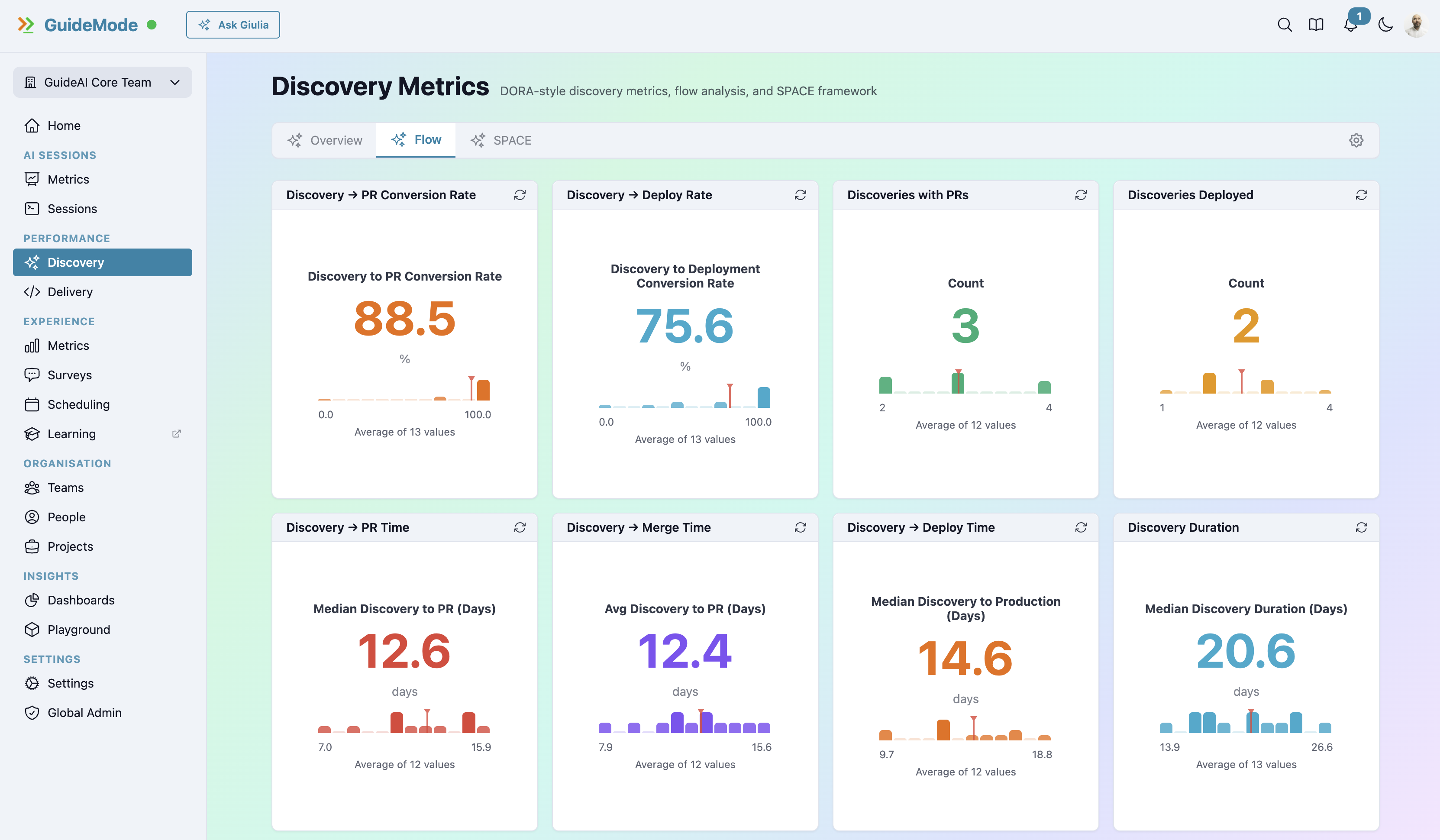

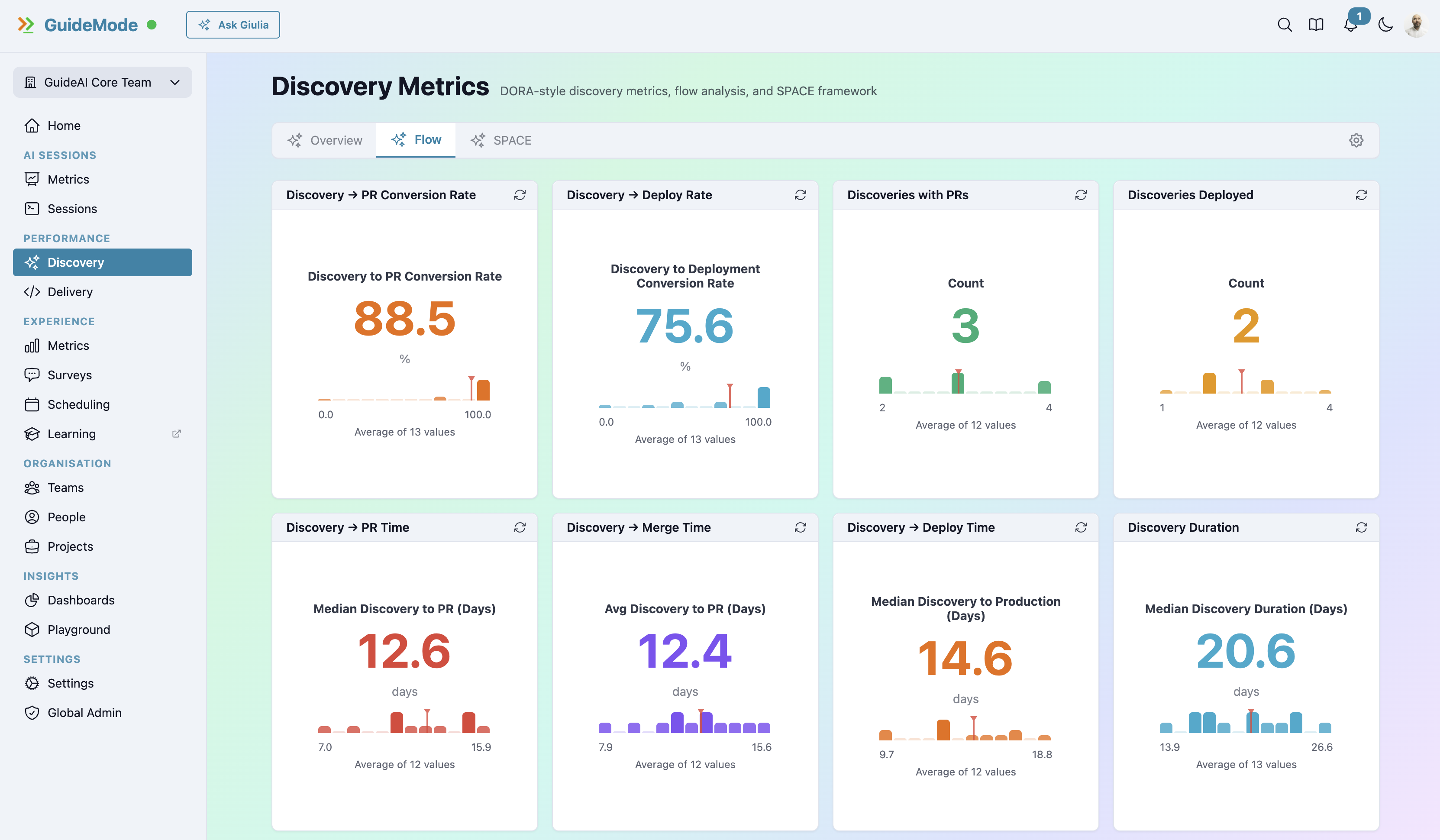

What Discovery Metrics Look Like

GuideMode tracks discovery through several dimensions:

Research Validation Tracking

- Hypotheses tested per sprint/cycle

- Validation rate (what percentage of hypotheses hold up?)

- Learning velocity (how quickly do you invalidate bad ideas?)

- Customer touchpoint frequency

Discovery Satisfaction (SPACE Surveys)

Research-backed surveys built on the SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow), applied to the team’s discovery work. These aren’t generic “how happy are you” questions; they’re designed specifically for research and validation work.

- Are teams happy with the research process?

- Do they have enough time for discovery?

- Are they confident in their validations?

- Is cross-functional collaboration working?
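A monthly pulse on the four questions above needs very little tooling. The Python sketch below assumes a 1-5 agreement scale, hypothetical question IDs and an arbitrary 3.5 cut-off. It averages the triad’s answers per question and flags weak spots. It does not reproduce the SPACE instrument or GuideMode’s survey. It only illustrates the aggregation step.

```python
from statistics import mean

# Hypothetical IDs for the four discovery questions listed above.
QUESTIONS = {
    "research_process": "Are teams happy with the research process?",
    "time_for_discovery": "Do they have enough time for discovery?",
    "validation_confidence": "Are they confident in their validations?",
    "collaboration": "Is cross-functional collaboration working?",
}


def summarise(
    responses: list[dict[str, int]], threshold: float = 3.5
) -> dict[str, tuple[float, bool]]:
    """Average each question's 1-5 scores and flag averages below `threshold`."""
    summary: dict[str, tuple[float, bool]] = {}
    for qid in QUESTIONS:
        scores = [r[qid] for r in responses if qid in r]
        if any(not 1 <= s <= 5 for s in scores):
            raise ValueError(f"{qid}: scores must be on a 1-5 scale")
        if scores:
            avg = float(mean(scores))
            summary[qid] = (avg, avg < threshold)
    return summary


# One monthly round from a PM, a designer and an engineer (made-up scores).
triad = [
    {"research_process": 4, "time_for_discovery": 2, "validation_confidence": 4, "collaboration": 5},
    {"research_process": 5, "time_for_discovery": 3, "validation_confidence": 3, "collaboration": 4},
    {"research_process": 4, "time_for_discovery": 2, "validation_confidence": 4, "collaboration": 4},
]
for qid, (avg, low) in summarise(triad).items():
    flag = " (low)" if low else ""
    print(f"{avg:.2f}{flag}  {QUESTIONS[qid]}")
```

In this made-up round, only “enough time for discovery” falls below the cut-off. That is the kind of signal a delivery-only dashboard never surfaces.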

Discovery-to-Delivery Correlation

This is where it gets interesting. By measuring both phases, you can answer questions like:

- Which validated discoveries lead to successful deployments?

- What’s the cycle time from research hypothesis to production feature?

- Do faster discovery cycles correlate with better delivery outcomes?

- How does research quality affect code quality?
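These questions only become answerable once each shipped feature can be traced back to the hypothesis behind it. The Python sketch below assumes such a link already exists. It uses a hypothetical `Trace` record that holds a hypothesis’s open, validation and deployment dates, plus an adoption figure standing in for “successful deployment”. The record shape, the adoption metric and the example dates are all illustrative and are not GuideMode’s data model. The sketch computes hypothesis-to-production cycle time and a plain Pearson correlation between discovery length and adoption.

```python
from dataclasses import dataclass
from datetime import date
from math import sqrt
from statistics import median


@dataclass
class Trace:
    """Hypothetical link from one hypothesis to the feature it became."""
    hypothesis_id: str
    opened: date     # hypothesis first recorded
    validated: date  # discovery concluded
    deployed: date   # feature reached production
    adoption: float  # hypothetical outcome: share of target users (0-1)


def discovery_days(t: Trace) -> int:
    return (t.validated - t.opened).days


def hypothesis_to_production_days(t: Trace) -> int:
    return (t.deployed - t.opened).days


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


traces = [
    Trace("H-1", date(2024, 3, 1), date(2024, 3, 8), date(2024, 3, 29), 0.42),
    Trace("H-2", date(2024, 3, 4), date(2024, 3, 25), date(2024, 4, 19), 0.18),
    Trace("H-3", date(2024, 3, 11), date(2024, 3, 15), date(2024, 4, 5), 0.35),
]
lead_times = [hypothesis_to_production_days(t) for t in traces]
print(lead_times)          # [28, 46, 25]
print(median(lead_times))  # 28
r = pearson([discovery_days(t) for t in traces], [t.adoption for t in traces])
print(f"discovery length vs adoption: r = {r:.2f}")
```

Three traces are far too few to read anything into `r`, and a correlation says nothing about cause. The point is that once hypotheses and deployments share an identifier, these questions become queries rather than guesses.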

The Connection Problem

Here’s a common scenario. A team has great DORA metrics—they’re shipping fast, deployments are reliable, cycle times are down. Leadership is happy.

But feature adoption is terrible. Nobody’s using the new features. Support tickets are up. Customers are complaining.

What happened? The delivery machine is working perfectly. The discovery process failed.

With DX² measurement, you can trace this:

- Were the features properly validated before development?

- How many customer touchpoints happened during discovery?

- What was the hypothesis validation rate?

- Did the discovery triad have enough time and resources?

Without discovery metrics, you’re debugging with half the data.

Why This Matters Now

The rise of AI coding tools makes discovery even more critical.

When AI can accelerate code generation by 50-60%, delivery bottlenecks shift. You can build faster than ever—which means you can waste resources faster than ever if you’re building the wrong things.

As Productboard notes: “The output of discovery work is input to the delivery track.” If discovery quality drops, delivery speed just amplifies the problems.

The DX² Advantage

By measuring both Discovery and Delivery, you can:

1. Prevent Waste Early: Catch bad ideas before they become bad code. A failed hypothesis in discovery costs hours. A failed feature in production costs weeks.

2. Optimise the Complete Cycle: End-to-end traceability from research insight to production deployment. See where your actual bottlenecks are. They might not be where you think.

3. Balance Roles and Resources: Are engineers spending too much time in delivery and not enough in discovery? Is the discovery triad under-resourced? You can’t answer this without measuring both sides.

4. Make Better Investment Decisions: Data-driven insights on the complete process. Not just “are we shipping fast” but “are we shipping the right things fast.”

The Comparison

| Capability | Other DX Platforms | GuideMode (DX²) |
|---|---|---|
| DORA Metrics | ✓ | ✓ |
| Deployment Tracking | ✓ | ✓ |
| PR Analytics | Some | ✓ (48 measures) |
| Discovery Metrics | ❌ | ✓ |
| SPACE Surveys | ❌ | ✓ |
| Research Validation | ❌ | ✓ |
| End-to-End Traceability | ❌ | ✓ |
| Discovery Satisfaction | ❌ | ✓ |

The gap isn’t subtle. Current platforms are measuring one diamond while ignoring the other entirely.

Getting Started

The good news: you don’t need to overhaul your process to start measuring discovery. Four places to start:

- Survey your discovery triad monthly on satisfaction and effectiveness

- Track hypothesis validation rates—even informally, start counting what you validate and invalidate

- Measure customer touchpoints during discovery phases

- Connect discovery to delivery outcomes—which validated ideas become successful features?

Once you have both halves of the picture, patterns emerge that you couldn’t see before.

Ready to Measure the Complete Picture?

DX² gives you visibility across both Discovery and Delivery—the only way to understand true team productivity.